Introduction

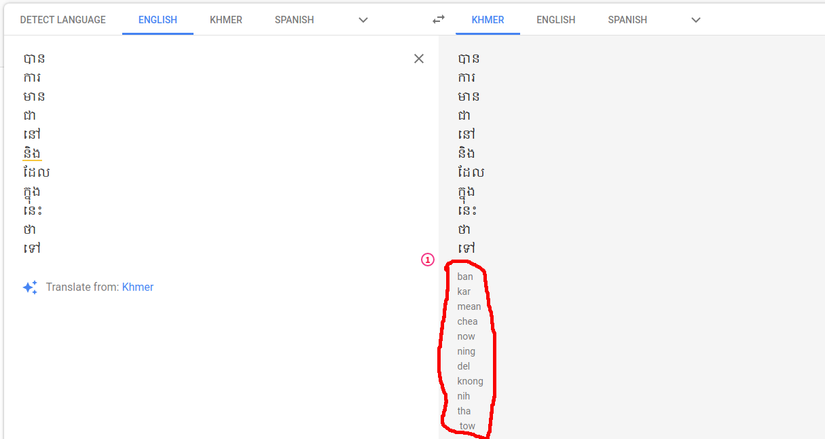

In our previous article, we implemented an application that converts Khmer words into Roman script by writing the logic from scratch, following a published paper, since we didn’t have enough data to apply deep learning to this problem. However, we noticed that Google Translate also romanizes Khmer words. We can therefore take the Khmer word list from our previous article, collect the romanization of each word, and use this data to train a model that converts Khmer words to Roman script.

Plan of attack

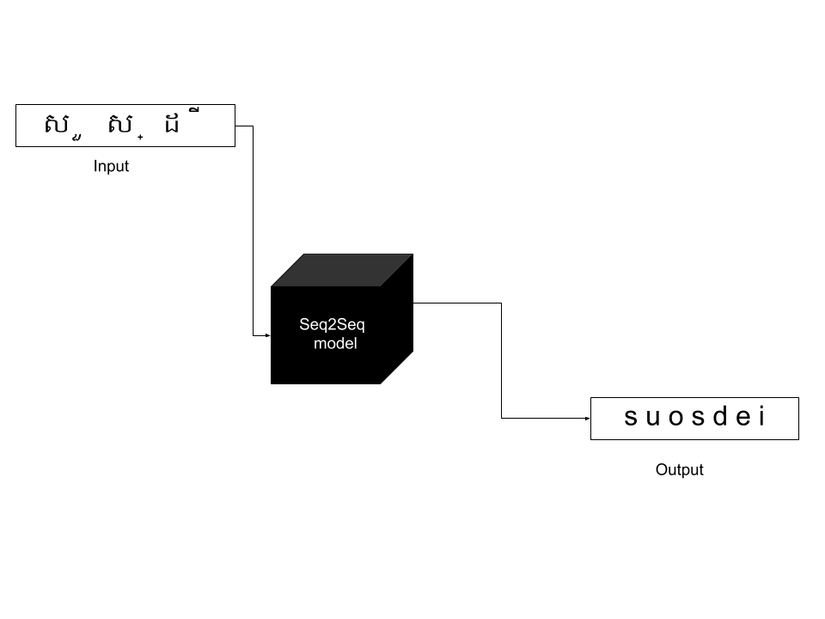

There are many machine learning algorithms we could use to solve our problem. Since our task is to build a model that translates Khmer words into Roman script, one architecture stands out: Seq2Seq. A Seq2Seq model takes a sequence as input (words, letters, time series, etc.) and outputs another sequence. This kind of model has achieved a lot of success in tasks like machine translation, text summarization, and image captioning. Google Translate started using such a model in production in late 2016. We also used this model in our earlier article about building a chatbot.

Implementation

For this experiment, we use Keras to develop our Seq2Seq model. Luckily, Keras has a tutorial on building a model that translates English to French. We will modify that code to translate Khmer words to Roman script instead. If anything in my code is unclear, you can check the original Keras example listed under Resources for a fuller explanation.

First, we import packages needed:

```python
from __future__ import print_function  # must come before any other import

import numpy as np
import pandas as pd
from keras.models import Model
from keras.layers import Input, LSTM, Dense
```

Then we load the data into memory using pandas:

```python
data_kh = pd.read_csv("data/data_kh.csv", header=None)
data_rom = pd.read_csv("data/data_rom.csv", header=None)

batch_size = 32  # Batch size for training.
epochs = 100  # Number of epochs to train for.
latent_dim = 256  # Latent dimensionality of the encoding space.
num_samples = 7154  # Number of samples to train on.

# Vectorize the data.
input_texts = []
target_texts = []
input_characters = set()
target_characters = set()
```

Once the data is loaded, we clean it and collect the set of unique characters on each side:

```python
for input_text in data_kh[0]:
    input_text = str(input_text).strip()
    input_texts.append(input_text)
    for char in input_text:
        if char not in input_characters:
            input_characters.add(char)

for target_text in data_rom[0]:
    # "\t" marks the start of a sequence and "\n" marks its end.
    target_text = '\t' + str(target_text).strip() + '\n'
    target_texts.append(target_text)
    for char in str(target_text):
        if char not in target_characters:
            target_characters.add(char)

num_encoder_tokens = len(input_characters)
num_decoder_tokens = len(target_characters)
max_encoder_seq_length = max([len(txt) for txt in input_texts])
max_decoder_seq_length = max([len(txt) for txt in target_texts])
```

Next, we initialize the arrays for the input and output sequences, sized by the maximum length of the input and output samples:

```python
input_token_index = dict(
    [(char, i) for i, char in enumerate(input_characters)])
target_token_index = dict(
    [(char, i) for i, char in enumerate(target_characters)])

encoder_input_data = np.zeros(
    (len(input_texts), max_encoder_seq_length, num_encoder_tokens),
    dtype='float32')
decoder_input_data = np.zeros(
    (len(input_texts), max_decoder_seq_length, num_decoder_tokens),
    dtype='float32')
decoder_target_data = np.zeros(
    (len(input_texts), max_decoder_seq_length, num_decoder_tokens),
    dtype='float32')
```

Then we one-hot encode the input and output sequences into these arrays before passing them to the model.

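The encoding step follows the Keras example this article is based on, and can be sketched roughly as below. This is a minimal, self-contained illustration and not the article's exact code: the toy word pairs are made up, and the characters are sorted (an assumption added here) so that the index mapping is the same on every run. The point to notice is that `decoder_target_data` is `decoder_input_data` shifted one timestep to the left, which is what lets the decoder learn to predict the next character.

```python
import numpy as np

# Hypothetical toy pairs standing in for the Khmer/Roman word lists.
input_texts = ["ab", "b"]
target_texts = ["\txy\n", "\ty\n"]  # "\t" = start marker, "\n" = stop marker

# Sorted so that the character-to-index mapping is reproducible.
input_chars = sorted(set("".join(input_texts)))
target_chars = sorted(set("".join(target_texts)))
input_token_index = {ch: i for i, ch in enumerate(input_chars)}
target_token_index = {ch: i for i, ch in enumerate(target_chars)}

max_enc_len = max(len(t) for t in input_texts)
max_dec_len = max(len(t) for t in target_texts)

# Shape: (number of samples, max sequence length, vocabulary size).
encoder_input_data = np.zeros(
    (len(input_texts), max_enc_len, len(input_chars)), dtype="float32")
decoder_input_data = np.zeros(
    (len(input_texts), max_dec_len, len(target_chars)), dtype="float32")
decoder_target_data = np.zeros_like(decoder_input_data)

for i, (input_text, target_text) in enumerate(zip(input_texts, target_texts)):
    for t, ch in enumerate(input_text):
        encoder_input_data[i, t, input_token_index[ch]] = 1.0
    for t, ch in enumerate(target_text):
        decoder_input_data[i, t, target_token_index[ch]] = 1.0
        if t > 0:
            # The target is the decoder input shifted one step to the left,
            # so it never contains the start marker.
            decoder_target_data[i, t - 1, target_token_index[ch]] = 1.0
```

Positions beyond the end of a shorter word simply stay all-zero, which acts as padding.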

Using Keras, we can build a Seq2Seq model with ease:

```python
# Define an input sequence and process it.
encoder_inputs = Input(shape=(None, num_encoder_tokens))
encoder = LSTM(latent_dim, return_state=True)
encoder_outputs, state_h, state_c = encoder(encoder_inputs)
# We discard `encoder_outputs` and only keep the states.
encoder_states = [state_h, state_c]

# Set up the decoder, using `encoder_states` as initial state.
decoder_inputs = Input(shape=(None, num_decoder_tokens))
# We set up our decoder to return full output sequences,
# and to return internal states as well. We don't use the
# return states in the training model, but we will use them in inference.
decoder_lstm = LSTM(latent_dim, return_sequences=True, return_state=True)
decoder_outputs, _, _ = decoder_lstm(decoder_inputs,
                                     initial_state=encoder_states)
decoder_dense = Dense(num_decoder_tokens, activation='softmax')
decoder_outputs = decoder_dense(decoder_outputs)

# Define the model that will turn
# `encoder_input_data` & `decoder_input_data` into `decoder_target_data`
model = Model([encoder_inputs, decoder_inputs], decoder_outputs)

# Run training
model.compile(optimizer='rmsprop', loss='categorical_crossentropy',
              metrics=['accuracy'])
```

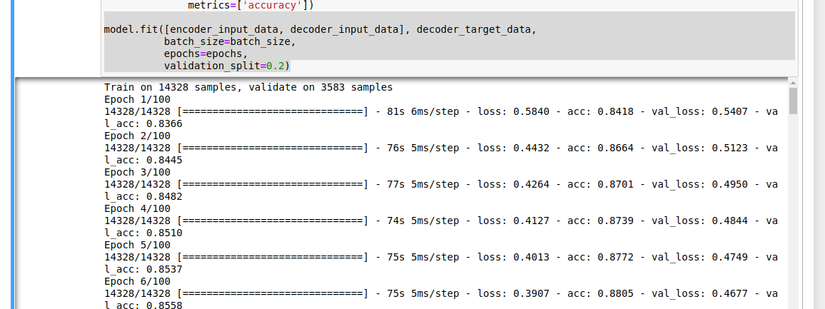

Then we can start training our model:

```python
model.fit([encoder_input_data, decoder_input_data], decoder_target_data,
          batch_size=batch_size,
          epochs=epochs,
          validation_split=0.2)
```
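One detail worth knowing about `validation_split`: Keras takes the validation samples from the end of the arrays, before any shuffling. If the word list happens to be sorted (for example alphabetically), the validation words may not be representative of the training words. A possible remedy, sketched here with a hypothetical helper named `shuffle_together` and toy arrays in place of the real data, is to apply one shared random permutation to all three arrays before calling `fit`:

```python
import numpy as np


def shuffle_together(*arrays, seed=0):
    """Apply one random permutation along axis 0 to every array.

    Hypothetical helper for illustration; not part of Keras or the
    original code.
    """
    n = len(arrays[0])
    if any(len(a) != n for a in arrays):
        raise ValueError("all arrays must have the same number of samples")
    order = np.random.default_rng(seed).permutation(n)
    return [a[order] for a in arrays]


# Toy stand-ins for encoder_input_data, decoder_input_data
# and decoder_target_data.
enc = np.arange(10).reshape(10, 1, 1)
dec_in = enc * 10
dec_out = enc * 100

enc_s, dec_in_s, dec_out_s = shuffle_together(enc, dec_in, dec_out)
```

Because the same permutation is used for every array, each encoder row still lines up with its decoder rows. If `input_texts` and `target_texts` are printed next to predictions later, they would need the same reordering.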

And don’t forget to save the trained model if you don’t want to retrain it:

```python
# Save model
# serialize model to JSON
model_json = model.to_json()
with open("model.json", "w") as json_file:
    json_file.write(model_json)
model.save('s2s.h5')
```

Testing

Once training is complete, we can test our model and check the results:

```python
encoder_model = Model(encoder_inputs, encoder_states)

decoder_state_input_h = Input(shape=(latent_dim,))
decoder_state_input_c = Input(shape=(latent_dim,))
decoder_states_inputs = [decoder_state_input_h, decoder_state_input_c]
decoder_outputs, state_h, state_c = decoder_lstm(
    decoder_inputs, initial_state=decoder_states_inputs)
decoder_states = [state_h, state_c]
decoder_outputs = decoder_dense(decoder_outputs)
decoder_model = Model(
    [decoder_inputs] + decoder_states_inputs,
    [decoder_outputs] + decoder_states)

reverse_input_char_index = dict(
    (i, char) for char, i in input_token_index.items())
reverse_target_char_index = dict(
    (i, char) for char, i in target_token_index.items())


def decode_sequence(input_seq):
    # Encode the input as state vectors.
    states_value = encoder_model.predict(input_seq)

    # Generate empty target sequence of length 1.
    target_seq = np.zeros((1, 1, num_decoder_tokens))
    # Populate the first character of target sequence with the start character.
    target_seq[0, 0, target_token_index['\t']] = 1.

    # Sampling loop for a batch of sequences
    # (to simplify, here we assume a batch of size 1).
    stop_condition = False
    decoded_sentence = ''
    while not stop_condition:
        output_tokens, h, c = decoder_model.predict(
            [target_seq] + states_value)

        # Sample a token
        sampled_token_index = np.argmax(output_tokens[0, -1, :])
        sampled_char = reverse_target_char_index[sampled_token_index]
        decoded_sentence += sampled_char

        # Exit condition: either hit max length
        # or find stop character.
        if (sampled_char == '\n' or
                len(decoded_sentence) > max_decoder_seq_length):
            stop_condition = True

        # Update the target sequence (of length 1).
        target_seq = np.zeros((1, 1, num_decoder_tokens))
        target_seq[0, 0, sampled_token_index] = 1.

        # Update states
        states_value = [h, c]

    return decoded_sentence


for seq_index in range(100):
    # Take one sequence (part of the training set)
    # for trying out decoding.
    input_seq = encoder_input_data[seq_index: seq_index + 1]
    decoded_sentence = decode_sequence(input_seq)
    print('KH: ' + input_texts[seq_index] +
          ', Roman: ' + target_texts[seq_index] +
          ', predicted: ' + decoded_sentence)
```

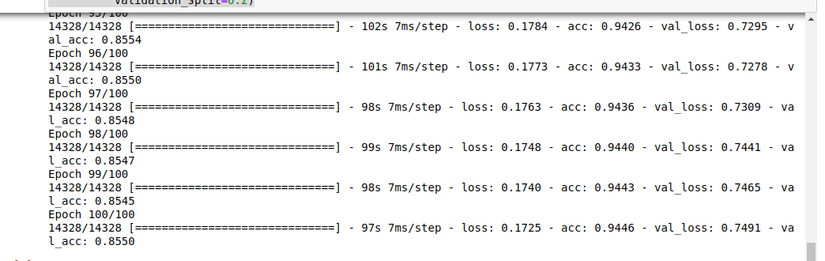

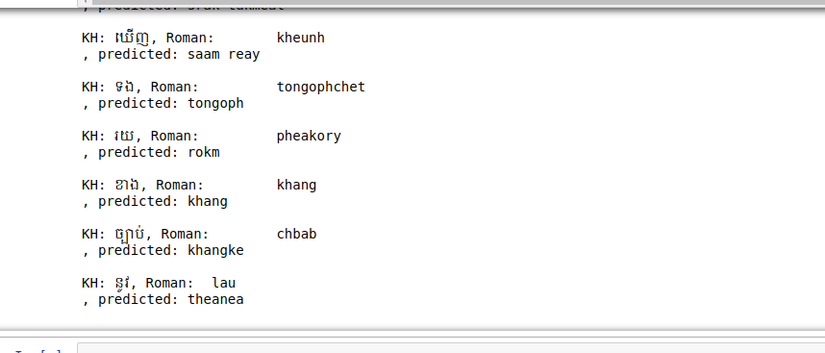

Let’s run it.

Based on the results, it seems our model is overfitted. So it’s your turn to improve this model and make it more awesome.
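One way to put a number on the overfitting is to score predictions separately for words the model trained on and for words held out for validation, then compare the two. The sketch below is only a suggestion and not part of the original code: `edit_distance` and `evaluate` are hypothetical helper names, and the sample strings are invented. It reports exact-match accuracy and a character error rate (total edit distance divided by total reference length), ignoring the start and stop markers.

```python
def edit_distance(a, b):
    """Levenshtein distance between two strings."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # delete ca
                            curr[j - 1] + 1,      # insert cb
                            prev[j - 1] + cost))  # substitute or match
        prev = curr
    return prev[-1]


def evaluate(references, predictions):
    """Return (exact-match accuracy, character error rate)."""
    # strip() removes the "\t" start marker and "\n" stop marker.
    refs = [r.strip() for r in references]
    preds = [p.strip() for p in predictions]
    exact = sum(r == p for r, p in zip(refs, preds)) / len(refs)
    errors = sum(edit_distance(r, p) for r, p in zip(refs, preds))
    cer = errors / sum(len(r) for r in refs)
    return exact, cer


# Invented reference and predicted romanizations, markers included.
refs = ["\tsuostei\n", "\tkhmer\n"]
preds = ["suostei\n", "khmae\n"]
accuracy, cer = evaluate(refs, preds)
```

A large gap between the training score and the held-out score would support the overfitting suspicion, and the same two numbers give a baseline to beat when trying improvements.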

Resources

- Source code

- https://keras.io/examples/lstm_seq2seq/

- https://github.com/udacity/deep-learning-v2-pytorch/blob/master/recurrent-neural-networks/char-rnn/Character_Level_RNN_Solution.ipynb

- https://karpathy.github.io/2015/05/21/rnn-effectiveness/

- https://towardsdatascience.com/day-1-2-attention-seq2seq-models-65df3f49e263

- https://www.guru99.com/seq2seq-model.html

What’s next?

In this article, we learned how to prepare our text data and built a model that trains on the processed data to translate Khmer words into Roman script. We used an architecture called Seq2Seq (also known as encoder-decoder), which is well suited to sequence problems. In our case, the input sequence is a Khmer word and the output sequence is its Roman form, and the two can differ in length. However, our model does not produce good predictions yet, so it’s your turn to improve it and compete with Google.