1. What is Deep Learning

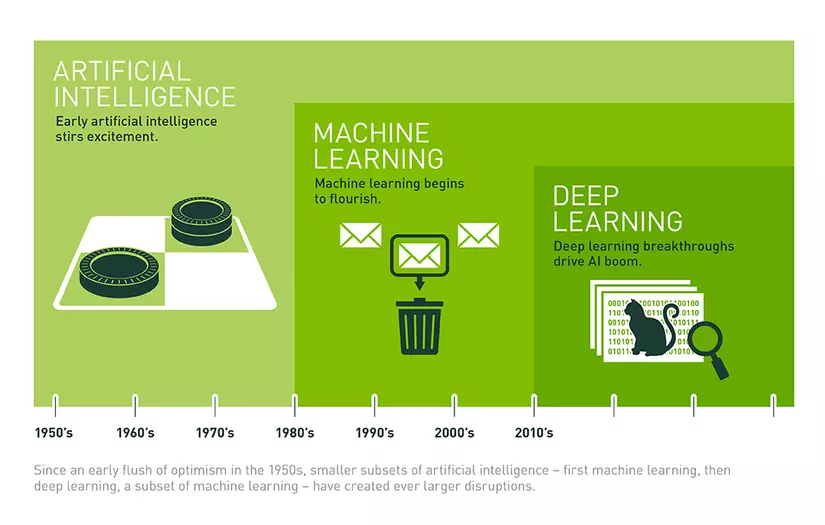

Artificial intelligence is creeping into our lives and influencing us deeply, and the terms “Artificial Intelligence”, “Machine Learning” and “Deep Learning” no longer sound strange. Let’s look at the figure describing the relationship between artificial intelligence, machine learning and deep learning:

Deep learning has become one of the hottest topics in AI. As a subfield of machine learning, deep learning focuses on artificial neural networks and uses them to improve technologies such as speech recognition, computer vision and natural language processing. It is now one of the most active areas of computer science: in just a few years, deep learning has driven progress in fields such as object recognition, machine translation and voice recognition, problems that had long been very difficult for artificial intelligence researchers.

To better understand deep learning, let’s look back at some of the basic concepts of artificial intelligence.

Artificial intelligence can be pictured simply as a stack of layers: artificial neural networks at the bottom, machine learning on the next floor, and deep learning on the top floor.

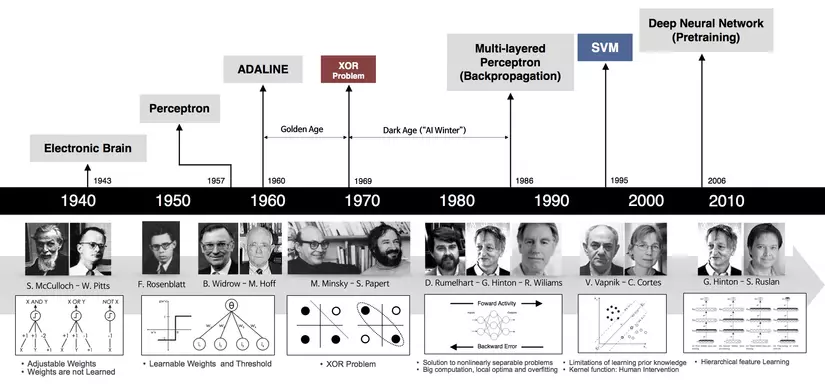

Deep learning has been mentioned a lot in recent years, but its foundations have been around for a long time. Since 2012, however, deep learning has made major breakthroughs and a series of supporting libraries have appeared. Along with them, more and more deep learning architectures have been proposed, and the number of deep learning applications and papers has increased dramatically.

2. Introducing Keras

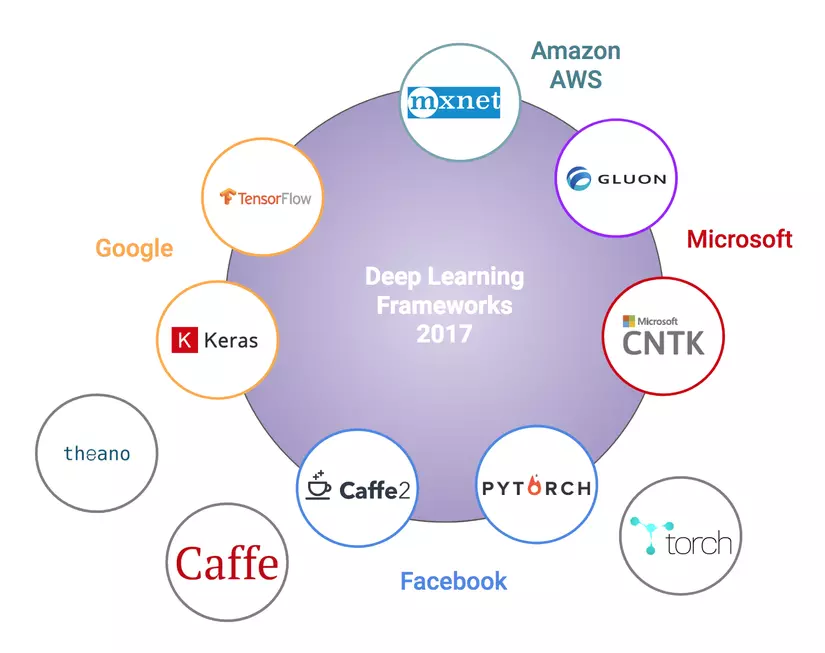

Deep learning libraries are often backed by large technology companies: Google (Keras, TensorFlow), Facebook (Caffe2, PyTorch), Microsoft (CNTK) and Amazon (MXNet). Microsoft and Amazon have also started building Gluon, a library similar to Keras. (These vendors run cloud computing services and want to attract users.)

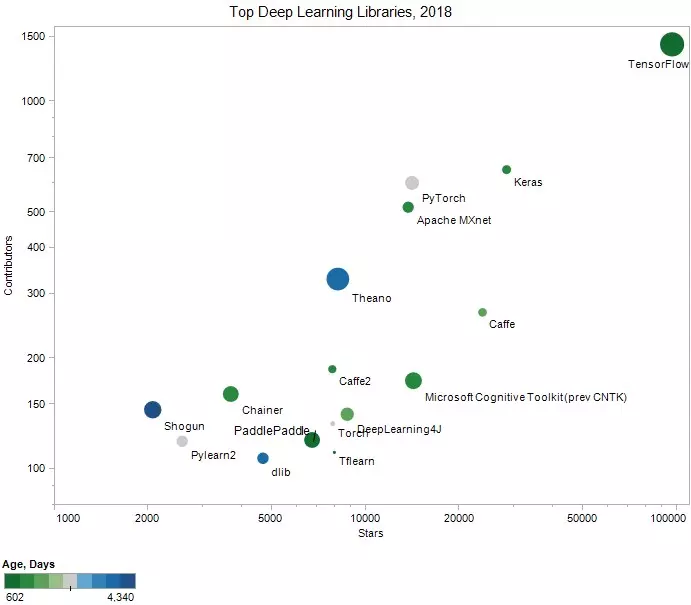

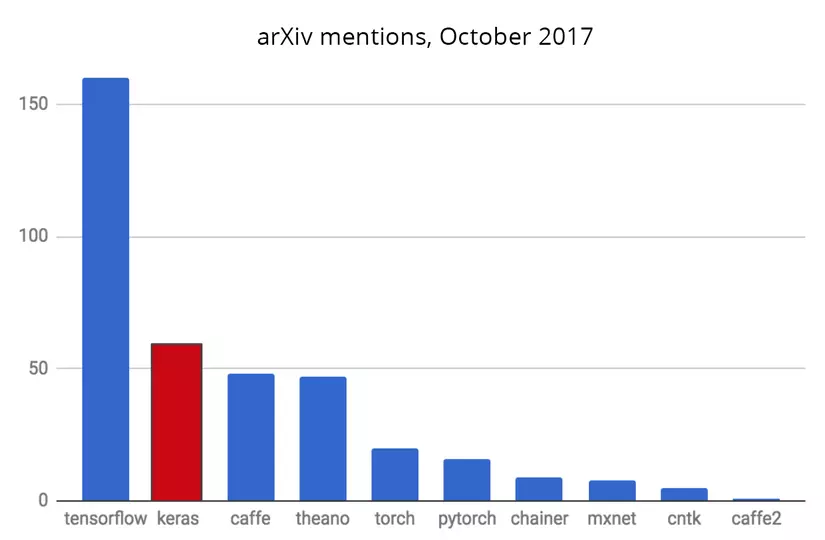

Here are some statistics that give an overview of the most widely used libraries:

- The number of stars on each library's GitHub repository, and its number of contributors

- The number of papers on arXiv that mention each library

These comparisons show that TensorFlow, Keras and Caffe are the most widely used libraries (PyTorch, a more recent arrival, is very easy to use and is attracting more and more users).

Keras is considered a ‘high-level’ library whose ‘low-level’ part (called the backend) can be TensorFlow, CNTK or Theano. Keras has a much simpler syntax than TensorFlow. Since the goal here is to introduce models rather than the libraries themselves, I will use Keras with TensorFlow as the backend.

Reasons to use Keras to get started:

- Keras prioritizes the developer experience

- Keras is widely used in both industry and the research community

- Keras makes it easy to turn designs into products

- Keras supports distributed training on multiple GPUs

- Keras supports multiple backend engines and does not lock you into a single ecosystem

3. Linear regression with Keras

Training a deep learning or neural network model generally involves the following steps:

- Data preparation

- Network construction

- Choice of an optimizer (the algorithm that updates the weights), a loss function and evaluation methods

- Model training

- Model evaluation

Let’s see Keras perform these steps through the example below.

Let’s start with a simple example. The input X has 2 dimensions, and the output is $y = 2x_0 + 3x_1 + 4 + e$, where $x_0, x_1$ are the two components of X and $e$ is noise drawn from a normal distribution with mean 0 and standard deviation 0.2 (that is, variance $0.2^2 = 0.04$).
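A quick way to confirm what the noise term means: the training code in this section scales standard normal samples by 0.2 (`.2 * np.random.randn(100)`), which sets the standard deviation to 0.2 and therefore the variance to 0.04. The sketch below generates data with the same recipe and checks both statistics. It uses NumPy's `default_rng` Generator instead of `np.random.rand`/`randn` (same distributions), and the fixed seed and large sample size are our own assumptions, chosen so the estimates are stable.

```python
import numpy as np

rng = np.random.default_rng(0)   # fixed seed (our assumption) for reproducibility
n = 100_000                      # far more than the example's 100 samples, for stable estimates

# Same recipe as the Keras example: two uniform features in [0, 1), linear target plus noise
X = rng.random((n, 2))
noise = 0.2 * rng.standard_normal(n)   # multiplying by 0.2 sets the standard deviation
y = 2 * X[:, 0] + 3 * X[:, 1] + 4 + noise

print(X.shape, y.shape)          # (100000, 2) (100000,)
print(round(noise.std(), 2))     # about 0.2
print(round(noise.var(), 3))     # about 0.04
```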

Here is an example code for training linear regression models using Keras:

```python
import numpy as np
from keras.models import Sequential
from keras.layers import Dense
from keras import optimizers

# 1. create pseudo data y = 2*x0 + 3*x1 + 4
X = np.random.rand(100, 2)
y = 2 * X[:, 0] + 3 * X[:, 1] + 4 + .2 * np.random.randn(100)  # noise added

# 2. Build model: a single fully connected unit on 2 inputs, linear activation
model = Sequential([Dense(1, input_shape=(2,), activation='linear')])

# 3. gradient descent optimizer (learning rate 0.1) and loss function
sgd = optimizers.SGD(learning_rate=0.1)
model.compile(loss='mse', optimizer=sgd)

# 4. Train the model
model.fit(X, y, epochs=100, batch_size=2)
```

Result

```
Epoch 1/100
100/100 [==============================] - 0s 5ms/step - loss: 1.7199
Epoch 2/100
100/100 [==============================] - 0s 709us/step - loss: 0.0388
Epoch 3/100
100/100 [==============================] - 0s 675us/step - loss: 0.0415
Epoch 4/100
100/100 [==============================] - 0s 774us/step - loss: 0.0392
Epoch 5/100
.....
Epoch 100/100
100/100 [==============================] - 0s 823us/step - loss: 0.0393
```

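The algorithm converges quickly, and the MSE loss is small once training is complete. In fact, the final loss of about 0.039 is close to the variance of the noise, $0.2^2 = 0.04$ (the code scales standard normal noise by 0.2), which is roughly the best MSE we can expect on average.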

A brief explanation of the code:

`# 1` creates the pseudo data, and `# 2` builds the model:

- `Sequential([<a list>])` builds a model whose layers are stacked in exactly the order given in `[<a list>]`. The first element of the list connects the input layer to the next layer, and each following element connects that layer to the one after it.

- `Dense` is a fully connected layer, i.e. every unit of the previous layer is connected to every unit of the current layer. The first argument of `Dense`, 1, means this layer has a single unit (here the output of linear regression is a single value). `input_shape=(2,)` is the shape of one input sample. It must be a tuple, so a one-dimensional shape is written `(2,)`. Later, when working with multi-dimensional data, the tuple will have more entries: for example, if the input is an RGB image of 224x224 pixels with 3 color channels, then `input_shape=(224, 224, 3)`.
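To make the `Dense` description concrete, here is the computation a `Dense(1, input_shape=(2,), activation='linear')` layer performs, written in plain NumPy. It is a sketch of the math, not Keras's implementation; the kernel of shape (2, 1) and bias of shape (1,) mirror the layout that `model.get_weights()` returns later in this article. The function name `dense_linear` and the weight values are hypothetical, chosen to equal the true coefficients of the example.

```python
import numpy as np

def dense_linear(x, kernel, bias):
    """Hypothetical helper: forward pass of a fully connected layer with linear activation.

    x:      (batch, in_features)  e.g. (batch, 2) for input_shape=(2,)
    kernel: (in_features, units)  e.g. (2, 1) for Dense(1)
    bias:   (units,)              e.g. (1,)
    """
    # Every input unit is connected to every output unit through the kernel matrix
    return x @ kernel + bias

# Hypothetical weights equal to the true coefficients of y = 2*x0 + 3*x1 + 4
kernel = np.array([[2.0], [3.0]])
bias = np.array([4.0])

x = np.array([[1.0, 1.0],
              [0.5, 0.0]])
out = dense_linear(x, kernel, bias)
print(out.shape)    # (2, 1): one output unit per sample
print(out.ravel())  # [9. 5.]
```

Stacking layers in `Sequential` amounts to feeding the output of one such function into the next.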

`# 3` chooses the gradient descent optimizer and the loss function:

- This step chooses how the weights are updated: here we use Stochastic Gradient Descent (SGD) with a learning rate of 0.1. Other optimizers are described in the Keras documentation page “Usage of optimizers”. `loss='mse'` selects the mean squared error, the standard loss function for linear regression.
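The two choices in `# 3` can also be written out by hand. The sketch below is our own plain-NumPy illustration, not Keras internals: it computes the MSE loss of the linear model and performs one gradient descent update with learning rate 0.1 on a single mini-batch of 2 samples (matching `batch_size=2`). The helper names `mse` and `sgd_step` and the batch values are hypothetical. The gradients follow from $L = \frac{1}{n}\sum_i (x_i^\top w + b - y_i)^2$.

```python
import numpy as np

def mse(pred, target):
    """Mean squared error: the average of the squared residuals."""
    return np.mean((pred - target) ** 2)

def sgd_step(w, b, X, y, lr=0.1):
    """One gradient descent update of the MSE loss for the linear model X @ w + b."""
    n = X.shape[0]
    residual = X @ w + b - y                 # shape (n,)
    grad_w = 2.0 / n * (X.T @ residual)      # dL/dw, shape (2,)
    grad_b = 2.0 / n * residual.sum()        # dL/db, a scalar
    return w - lr * grad_w, b - lr * grad_b  # move against the gradient

# One hypothetical mini-batch of 2 noise-free samples from y = 2*x0 + 3*x1 + 4
X_batch = np.array([[0.2, 0.5],
                    [0.9, 0.1]])
y_batch = 2 * X_batch[:, 0] + 3 * X_batch[:, 1] + 4

w, b = np.zeros(2), 0.0                      # start from all-zero weights
loss_before = mse(X_batch @ w + b, y_batch)
w, b = sgd_step(w, b, X_batch, y_batch, lr=0.1)
loss_after = mse(X_batch @ w + b, y_batch)
print(loss_before, loss_after)               # the loss drops after a single step
```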

After building the model and specifying the optimizer and the loss function, we train the model in `# 4`:

Keras is quite similar to scikit-learn in that it trains models with the `.fit()` method. Here, `epochs` is the number of passes over the whole training set and `batch_size` is the number of samples in each mini-batch.
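To show what `epochs` and `batch_size` mean in practice, here is a minimal mini-batch training loop in NumPy. It is a sketch under our own assumptions (a fixed seed, reshuffling every epoch, plain SGD on the MSE loss), not Keras's exact procedure. With 100 samples and `batch_size=2`, each epoch performs 50 weight updates, so 100 epochs make 5,000 updates in total.

```python
import numpy as np

rng = np.random.default_rng(42)          # fixed seed (our assumption)
X = rng.random((100, 2))
y = 2 * X[:, 0] + 3 * X[:, 1] + 4 + 0.2 * rng.standard_normal(100)

w, b = np.zeros(2), 0.0
lr, epochs, batch_size = 0.1, 100, 2
updates = 0

for epoch in range(epochs):              # one epoch = one full pass over the data
    order = rng.permutation(len(X))      # reshuffle the samples every epoch
    for start in range(0, len(X), batch_size):
        idx = order[start:start + batch_size]    # indices of one mini-batch
        residual = X[idx] @ w + b - y[idx]
        w -= lr * 2.0 / len(idx) * (X[idx].T @ residual)
        b -= lr * 2.0 / len(idx) * residual.sum()
        updates += 1

final_loss = np.mean((X @ w + b - y) ** 2)
print(updates)            # 5000 = 100 epochs * 50 mini-batches
print(w, b, final_loss)   # weights near [2, 3], bias near 4, loss near the noise variance
```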

To see the coefficients found by linear regression, we use:

```python
model.get_weights()
```

Result

```
[array([[1.996118 ],
        [3.0239758]], dtype=float32),
 array([3.963116], dtype=float32)]
```

Here, the first element of the list is the weight matrix (the coefficients found), and the second element is the bias. The result is close to the true solution of the problem, $y = 2x_0 + 3x_1 + 4$.
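Because linear regression has a closed-form least-squares solution, we can cross-check what gradient descent found. The sketch below (our own illustration; the fixed seed means its numbers will differ from the Keras run above) fits the same kind of data with NumPy's `np.linalg.lstsq`, appending a column of ones so that the last coefficient plays the role of the bias.

```python
import numpy as np

rng = np.random.default_rng(0)          # fixed seed (our assumption)
X = rng.random((100, 2))
y = 2 * X[:, 0] + 3 * X[:, 1] + 4 + 0.2 * rng.standard_normal(100)

# Append a column of ones so the last coefficient acts as the bias term
A = np.hstack([X, np.ones((len(X), 1))])
coef, *_ = np.linalg.lstsq(A, y, rcond=None)

w_ls, b_ls = coef[:2], coef[2]
print(w_ls, b_ls)   # close to [2, 3] and 4
```

Small differences from the SGD weights are expected: with a constant learning rate, SGD keeps fluctuating slightly around the least-squares solution.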

4. Conclusion

- Keras is a relatively easy-to-use library for beginners. It provides the necessary functions with simple syntax.

- As we go deeper into deep learning in the following articles, we will gradually become familiar with programming techniques in Keras. I hope this article has given you a basic understanding of deep learning and the Keras library.