How do you know if you’re handsome or pretty if you’re not relying on others?

The answer: let AI do it for you. Today I will show you how to build a fun little project called “Beauty Evaluate”. We will train a deep learning model on the SCUT-FBP5500 dataset to get the “ultimate” model for predicting how good-looking you are. My code is linked at the bottom of the article. Now let’s begin =))

Theory

A little about the dataset

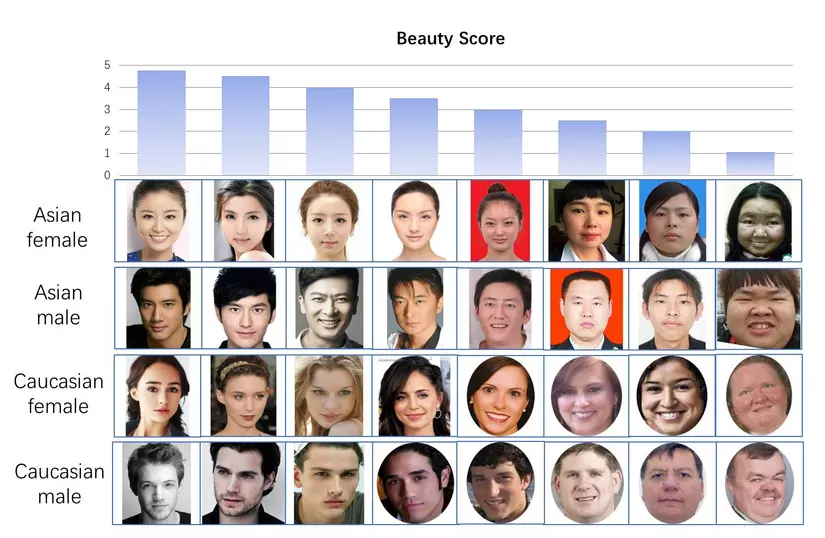

The SCUT-FBP5500 dataset contains a total of 5500 faces: men and women, Asian and Caucasian, old and young. It comes with diverse labels (facial landmarks, beauty scores on a 5-point scale, and beauty score distributions), which makes it usable for different kinds of facial beauty prediction models. The dataset can be divided into four subsets by race and gender: 2000 Asian women (AF), 2000 Asian men (AM), 750 white women (CF) and 750 white men (CM). Most images in SCUT-FBP5500 were collected from the Internet.

You can read more details in the paper here and download the dataset here.
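To check the subset sizes yourself, a minimal sketch could count images by filename prefix. It assumes each filename starts with its two-letter subset code (AF, AM, CF, CM); the filenames below are hypothetical and only there for illustration.

```python
from collections import Counter


def count_subsets(filenames):
    """Count images per subset, assuming names start with a two-letter code."""
    return Counter(name[:2] for name in filenames)


# Hypothetical filenames, for illustration only.
sample = ["AF1.jpg", "AF2.jpg", "AM1.jpg", "CF1.jpg", "CM1.jpg", "CM2.jpg"]
counts = count_subsets(sample)
print(counts)
```

Run on the full list of image names, the four counts should add up to 5500.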

Training / Testing set split

The dataset download ships with two experimental splits:

- 5-fold cross validation: in each fold, 80% of the samples (4400 images) are used for training and the remaining 20% (1100 images) for testing.

- A single split with 60% of the samples (3300 images) for training and 40% (2200 images) for testing.
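The dataset already ships its own split files, so you do not need to generate folds yourself. Purely to illustrate the 80/20 arithmetic of 5-fold cross validation, here is a sketch with a hypothetical helper `five_fold_indices` (not part of the dataset or of my code):

```python
import random


def five_fold_indices(n_samples, n_folds=5, seed=0):
    """Yield (train_idx, test_idx) pairs; each fold holds out 1/n_folds of the data.

    If n_samples is not divisible by n_folds, the leftover samples always
    stay in the training part.
    """
    order = list(range(n_samples))
    random.Random(seed).shuffle(order)
    fold_size = n_samples // n_folds
    for k in range(n_folds):
        start, stop = k * fold_size, (k + 1) * fold_size
        test_idx = order[start:stop]
        train_idx = order[:start] + order[stop:]
        yield train_idx, test_idx


splits = list(five_fold_indices(5500))
for train_idx, test_idx in splits:
    print(len(train_idx), len(test_idx))
```

Every image lands in the test part of exactly one fold, so the five test scores together cover the whole dataset.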

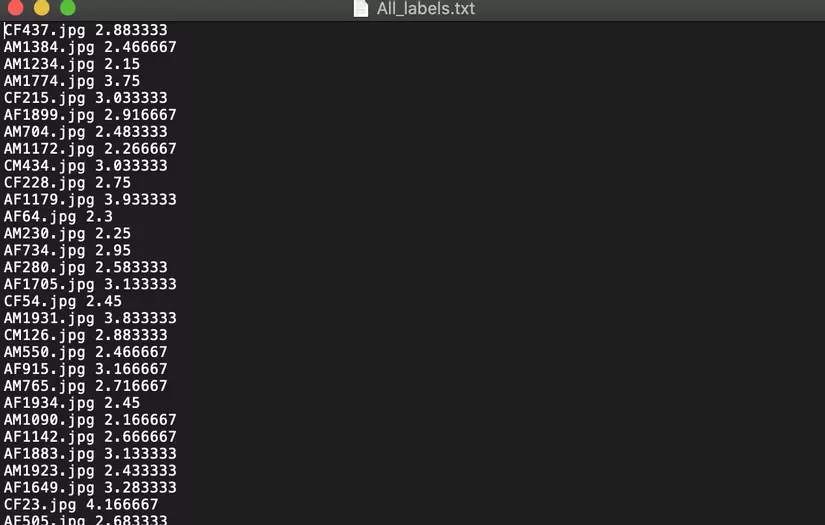

This is the label file of the training set. As you can see, each line holds an image name with its beauty score next to it.

And here are some images for each respective label:

Practice

Okay, the theory is done. Now comes the part I like writing the most =))

Split data

Use this function to read a label file such as ALL_labels.txt. It splits every line into the image path and its score, so the paths can later be taken on their own, without the scores.

```python
import numpy as np


def get_labels(file):
    # Each line looks like "<image name> <score>"; split it into two columns
    with open(file, 'r') as f:
        lines = f.readlines()
    return np.array([line.strip().split() for line in lines])
```
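To see what `get_labels` returns, here is a small self-contained sketch. It writes two made-up label lines to a temporary file (the names and scores are hypothetical) and parses them the same way. Note that the result is an array of strings, so the score column still has to be converted to float.

```python
import os
import tempfile

import numpy as np


def get_labels(file):
    # Same parsing as above: one "<image name> <score>" pair per line
    with open(file, 'r') as f:
        return np.array([line.strip().split() for line in f])


# Hypothetical label lines, for illustration only.
sample = "AF1.jpg 2.5\nCM2.jpg 3.75\n"
with tempfile.NamedTemporaryFile('w', suffix='.txt', delete=False) as tmp:
    tmp.write(sample)
    tmp_path = tmp.name

labels = get_labels(tmp_path)
os.remove(tmp_path)

names = labels[:, 0]                 # image names (strings)
scores = labels[:, 1].astype(float)  # scores converted from strings
print(labels.shape, names, scores)
```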

Here I use the split files for 60% training and 40% testing:

```python
# Note the trailing slash, so that PATH + 'train.txt' is a valid path
PATH = './train_test_files/split_of_60%training and 40%testing/'
PATH_IMAGES = './Images/'

train_labels = get_labels(PATH + 'train.txt')
test_labels = get_labels(PATH + 'test.txt')
```

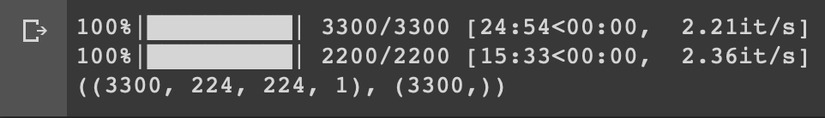

Use cv2.imread to read each input image into RAM as an array. Note: I only do it this way because it is convenient :v. If you write this yourself, I encourage you to save the arrays to a file so that the next training run can skip this step. If you use Google Colab, you will quickly understand why :v

```python
import cv2
from tqdm import tqdm

# Read every image as grayscale (flag 0), then resize it to 224x224
X_pre_train = [cv2.imread(PATH_IMAGES + file, 0) for file in tqdm(train_labels[:, 0])]
X_train = np.array([cv2.resize(img, (224, 224), interpolation=cv2.INTER_AREA) for img in X_pre_train])

X_pre_test = [cv2.imread(PATH_IMAGES + file, 0) for file in tqdm(test_labels[:, 0])]
X_test = np.array([cv2.resize(img, (224, 224), interpolation=cv2.INTER_AREA) for img in X_pre_test])

# The scores are stored as strings in the label arrays; convert them to float
y_train = np.array([float(flo) for flo in train_labels[:, 1]])
y_test = np.array([float(flo) for flo in test_labels[:, 1]])
```
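,</span> <span class="token number">1</span>">
One way to save the arrays so the next run can skip the loading step is a small cache helper around `np.savez_compressed`. This is only a sketch: `load_or_build`, `fake_build` and the cache file name are hypothetical names, and `fake_build` stands in for the real imread and resize loop.

```python
import os
import tempfile

import numpy as np


def load_or_build(cache_path, build_fn):
    """Return (X, y) from cache_path if it exists, else build and save them.

    cache_path should end with '.npz', because np.savez_compressed appends
    that extension when it is missing.
    """
    if os.path.exists(cache_path):
        with np.load(cache_path) as data:
            return data["X"], data["y"]
    X, y = build_fn()
    np.savez_compressed(cache_path, X=X, y=y)
    return X, y


def fake_build():
    # Stand-in for the slow image loading step (dummy data)
    return np.zeros((4, 224, 224), dtype=np.uint8), np.array([1.0, 2.0, 3.0, 4.0])


cache = os.path.join(tempfile.mkdtemp(), "train_cache.npz")
X1, y1 = load_or_build(cache, fake_build)  # first call: builds and saves
X2, y2 = load_or_build(cache, fake_build)  # second call: loads from disk
print(X2.shape, y2)
```

On Colab you would point the cache path at a location that survives a session restart, for example a mounted drive.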

Finally, reshape the arrays to the input shape of the network, 224×224 with 1 channel:

```python
X_train = X_train.reshape((X_train.shape[0], X_train.shape[1], X_train.shape[2], 1))
X_test = X_test.reshape((X_test.shape[0], X_test.shape[1], X_test.shape[2], 1))
```
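A quick way to check the resulting shape, sketched here on a dummy batch of three blank grayscale images instead of the real data. `np.expand_dims` is shown as an equivalent, shorter way to add the channel axis.

```python
import numpy as np

# Dummy batch: 3 grayscale images of 224x224 (stand-in for X_train)
X = np.zeros((3, 224, 224), dtype=np.uint8)

# Same reshape as above: append a channel axis of size 1
X_reshaped = X.reshape((X.shape[0], X.shape[1], X.shape[2], 1))

# Equivalent alternative
X_expanded = np.expand_dims(X, axis=-1)

print(X_reshaped.shape, X_expanded.shape)
```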

Loss function

I use RMSE as the loss because it suits the problem or, to be blunt, because the paper does :v. You can read about RMSE here.

```python
from keras import backend as K


def root_mean_squared_error(y_true, y_pred):
    # Square the errors, average over the last (output) axis, take the root
    return K.sqrt(K.mean(K.square(y_pred - y_true), axis=-1))
```
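To make the formula concrete, here is a small worked example in NumPy with made-up scores. It also shows a detail worth knowing: if the predictions have shape (batch, 1), averaging over `axis=-1` only covers one value per sample, so the per-sample result is the absolute error, and averaging those over the batch gives the mean absolute error rather than the batch-level RMSE. Whether that matters for your training is something to decide for yourself; this is a sketch, not a statement about what the paper does.

```python
import numpy as np

# Made-up true and predicted scores, shape (batch, 1)
y_true = np.array([[3.0], [4.0], [5.0]])
y_pred = np.array([[2.0], [4.0], [7.0]])

# RMSE over the whole batch: errors are -1, 0, 2, so sqrt((1 + 0 + 4) / 3)
rmse = np.sqrt(np.mean(np.square(y_pred - y_true)))

# Same ops with axis=-1: one value per sample, equal to |error|
per_sample = np.sqrt(np.mean(np.square(y_pred - y_true), axis=-1))
averaged = per_sample.mean()

print(rmse, per_sample, averaged)
```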

Build Model

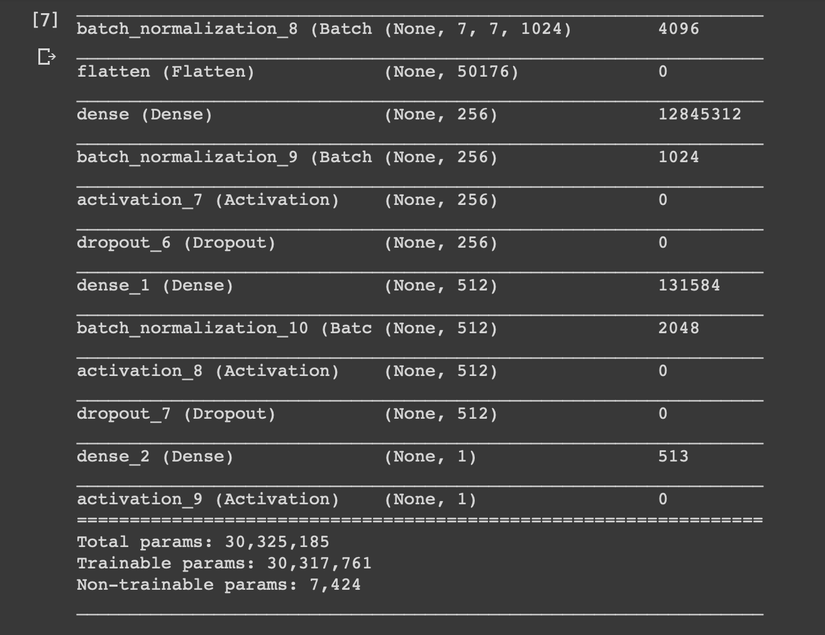

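- Input image of 224×224 with 1 channel

- 7 Conv2D layers with up to 1024 filters per layer

- 6 MaxPooling2D layers in the code below (the last one uses a 1×1 pool, which leaves the feature map size unchanged)

- 2 FC layers, followed by a single-unit output layer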

```python
from keras.models import Sequential
from keras.layers import (Activation, BatchNormalization, Conv2D, Dense,
                          Dropout, Flatten, MaxPooling2D)


class Beau:
    def __init__(self):
        self.model = Sequential()

        #1st 2dConvolutional Layer
        self.model.add(Conv2D(64, (3, 3), padding='same', input_shape=(224, 224, 1)))
        self.model.add(Activation('relu'))
        #1st 2dMaxPool Layer
        self.model.add(MaxPooling2D(pool_size=(2, 2)))
        self.model.add(BatchNormalization())
        self.model.add(Dropout(0.5))

        #2nd 2dConvolutional Layer
        self.model.add(Conv2D(64, (3, 3), padding='same'))
        self.model.add(Activation('relu'))
        #2nd 2dMaxPool Layer
        self.model.add(MaxPooling2D(pool_size=(2, 2)))
        self.model.add(BatchNormalization())
        self.model.add(Dropout(0.25))

        #3rd 2dConvolutional Layer
        self.model.add(Conv2D(128, (5, 5), padding='same'))
        self.model.add(Activation('relu'))
        #3rd 2dMaxPool Layer
        self.model.add(MaxPooling2D(pool_size=(2, 2)))
        self.model.add(BatchNormalization())
        self.model.add(Dropout(0.5))

        #4th 2dConvolutional Layer
        self.model.add(Conv2D(128, (5, 5), padding='same'))
        self.model.add(Activation('relu'))
        #4th 2dMaxPool Layer
        self.model.add(MaxPooling2D(pool_size=(2, 2)))
        self.model.add(BatchNormalization())
        self.model.add(Dropout(0.25))

        #5th 2dConvolutional Layer
        self.model.add(Conv2D(256, (3, 3), padding='same'))
        self.model.add(Activation('relu'))
        self.model.add(BatchNormalization())
        #5th 2dMaxPool Layer
        self.model.add(MaxPooling2D(pool_size=(2, 2)))
        self.model.add(BatchNormalization())
        self.model.add(Dropout(0.25))

        #6th 2dConvolutional Layer
        self.model.add(Conv2D(512, (5, 5), padding='same'))
        self.model.add(Activation('relu'))
        self.model.add(BatchNormalization())
        #6th 2dMaxPool Layer (1x1 pool, keeps the spatial size)
        self.model.add(MaxPooling2D(pool_size=(1, 1)))
        self.model.add(BatchNormalization())
        self.model.add(Dropout(0.5))

        #7th 2dConvolutional Layer
        self.model.add(Conv2D(1024, (5, 5), padding='same'))
        self.model.add(Activation('relu'))
        self.model.add(BatchNormalization())

        self.model.add(Flatten())

        #1st FC Layer
        self.model.add(Dense(256))
        self.model.add(BatchNormalization())
        self.model.add(Activation('relu'))
        self.model.add(Dropout(0.25))

        #2nd FC Layer
        self.model.add(Dense(512))
        self.model.add(BatchNormalization())
        self.model.add(Activation('relu'))
        self.model.add(Dropout(0.25))

        # Output layer
        self.model.add(Dense(1))
        self.model.add(Activation('relu'))

        # self.model.summary()
```

The network I used comes from another author (I credit it in the references at the end), but I adjusted it to my liking by adding a Conv2D layer, setting the dropout, and resizing the input image to 224×224. Their original network has 7 million parameters; after my adjustments it grew to 30 million :v
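To get a feel for why a bigger input image inflates the parameter count so much, here is a rough sketch. The numbers in it (a 48×48 versus a 224×224 input, four 2×2 pooling steps, 256 channels before Flatten) are assumptions picked for illustration, not the exact layers of either network. It only counts the first Dense(256) layer after Flatten, which is usually where most of the weights end up.

```python
def flattened_size(side: int, n_pools: int, channels: int) -> int:
    """Features left after n_pools 2x2 poolings on a square input.

    Assumes the convolutions keep the spatial size ('same' padding),
    so only pooling shrinks the feature map.
    """
    for _ in range(n_pools):
        side //= 2
    return side * side * channels


def dense_params(inputs: int, units: int) -> int:
    """Weights plus biases of a fully connected layer."""
    return inputs * units + units


if __name__ == "__main__":
    # Hypothetical input sizes, not taken from the original network
    for side in (48, 224):
        flat = flattened_size(side, n_pools=4, channels=256)
        print(side, flat, dense_params(flat, 256))
```

With these made-up settings the first Dense layer alone goes from roughly 0.6 million to roughly 12.8 million weights, so a jump of the same order as mine is not surprising.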

Training

I use SGD instead of Adam because it was more stable (I tried Adam first, but its loss jumped around too much). I set EarlyStopping so that training stops on its own once val_loss has not improved for 15 epochs (the `patience` value in the code), and a checkpoint callback that saves the best model so far.

```python
from keras.callbacks import EarlyStopping, ModelCheckpoint
from keras.optimizers import SGD

# SGD with Nesterov momentum and a small learning rate
sgd = SGD(lr=0.0001, decay=1e-6, momentum=0.9, nesterov=True)
# metrics has to be passed as a list
model.compile(optimizer=sgd, loss=root_mean_squared_error,
              metrics=[root_mean_squared_error])

# Stop once val_loss has not improved for 15 consecutive epochs
earlyStopping = EarlyStopping(monitor='val_loss', patience=15, verbose=0, mode='min')

# Write a checkpoint only when val_loss reaches a new minimum
filepath = "{epoch:02d}-{val_loss:.2f}.h5"
checkpoint = ModelCheckpoint(filepath, monitor='val_loss', verbose=1,
                             save_best_only=True, mode='min')

history = model.fit(X_train, y_train,
                    callbacks=[earlyStopping, checkpoint],
                    batch_size=128, epochs=100, verbose=1,
                    validation_data=(X_test, y_test))
```
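If the two callbacks feel like magic, this is roughly what they do with the val_loss values. It is a plain-Python sketch of the patience logic, not the Keras source; `simulate_callbacks` and the loss values are made up for illustration.

```python
def simulate_callbacks(val_losses, patience):
    """Replay a list of per-epoch val_loss values.

    Returns (stop_epoch, saved_epochs):
      stop_epoch   - 1-based epoch where early stopping would trigger,
                     or None if training runs to the end
      saved_epochs - epochs where a save-best-only checkpoint would be written
    """
    best = float("inf")
    wait = 0
    saved_epochs = []
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best:
            # New minimum: remember it, reset the counter, save a checkpoint
            best = loss
            wait = 0
            saved_epochs.append(epoch)
        else:
            # No improvement: count it against the patience budget
            wait += 1
            if wait >= patience:
                return epoch, saved_epochs
    return None, saved_epochs


if __name__ == "__main__":
    # Made-up validation losses
    losses = [1.0, 0.8, 0.9, 0.85, 0.81, 0.7]
    print(simulate_callbacks(losses, patience=3))
    print(simulate_callbacks(losses, patience=4))
```

With a patience of 3 the run stops before the late improvement at epoch 6 is ever seen, which is why I prefer a fairly generous patience when the loss is noisy.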

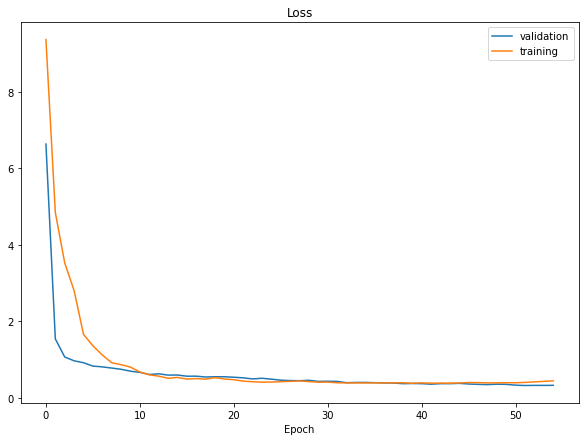

This is the result of my training: the loss settles at about 0.28, which I think is pretty good :v Some of you may wonder why I did not use image augmentation to get better training results. The answer is that I tried it and it did not improve things much :))
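To put that 0.28 in context: the loss is a root mean squared error, so it is measured in the same units as the beauty score itself. Below is a small pure-Python sketch of the formula with made-up scores. In Keras the same thing is usually written with backend ops, something like `K.sqrt(K.mean(K.square(y_pred - y_true)))`, but that is my assumption about how `root_mean_squared_error` looks.

```python
import math


def rmse(y_true, y_pred):
    """Root mean squared error between two equal-length lists of scores."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    squared_errors = [(p - t) ** 2 for t, p in zip(y_true, y_pred)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


if __name__ == "__main__":
    # Made-up labels and predictions on the 1-5 scale
    labels = [2.0, 4.0, 3.5]
    predictions = [2.3, 3.7, 3.8]
    print(rmse(labels, predictions))
```

Because every prediction in this toy example is off by 0.3, the RMSE is also about 0.3. A loss of 0.28 therefore means the predictions are typically within about a quarter to a third of a point of the labelled score.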

Result

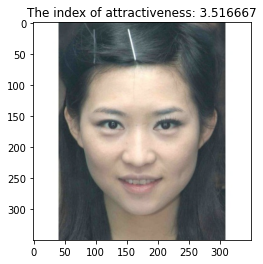

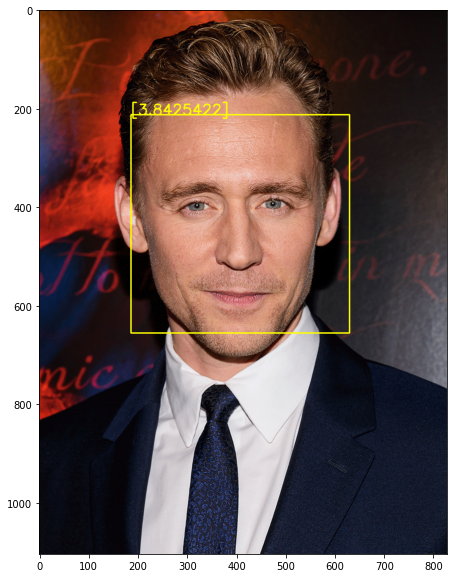

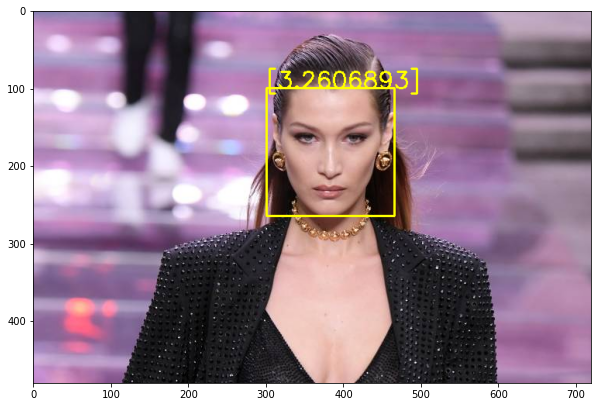

With the model trained, we need code that loads it and shows the results. I use OpenCV to detect faces because it is fast =)). Each face is scored on a scale of 1 to 5 (1 is the least attractive, 5 is the most attractive :v).

```python
import math

import cv2
import imutils
import matplotlib.pyplot as plt

# One BGR colour per score band, indexed by the floored prediction
color = [(255, 0, 0), (0, 255, 255), (0, 255, 0), (0, 100, 255), (0, 0, 255)][::-1]

imagepath = 'image to predict'  # placeholder: path to the image you want to score
face_clf = cv2.CascadeClassifier('path to haarcascade_frontalface_default.xml')  # placeholder path

Model = Beau()
Model.model.load_weights('model.h5')

image_name = imagepath.split('/')[-1]
img = cv2.imread(imagepath)
gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)
faces = face_clf.detectMultiScale(gray, 1.3, 5)

for (x, y, w, h) in faces:
    # Crop the face, resize it to the network input size, add batch and channel axes
    fc = gray[y:y + h, x:x + w]
    roi = cv2.resize(fc, (224, 224))
    roi = roi.reshape((1, 224, 224, 1))
    pred = float(Model.model.predict(roi)[0][0])
    # Clamp the index so a score of 5 or more cannot run past the end of the list
    c = color[min(math.floor(pred), len(color) - 1)]
    cv2.putText(img, str(pred), (x, y), cv2.FONT_HERSHEY_SIMPLEX, 1, c, 2)
    cv2.rectangle(img, (x, y), (x + w, y + h), c, 2)
    print(pred)

plt.figure(figsize=(7, 7))
plt.imshow(imutils.opencv2matplotlib(img))
# cv2.imwrite('out' + image_name, img)
```
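One detail worth spelling out is the colour lookup. The list has five entries (indices 0 to 4), the index is the floored score, and the ReLU output has no upper bound, so a prediction of 5.0 or more would point past the end of the list. Here is a small sketch of a clamped lookup; `color_for_score` is a helper name I made up, and the colours are the same BGR tuples as in the code above.

```python
import math

# Same five BGR colours as in the article, reversed the same way
COLORS = [(255, 0, 0), (0, 255, 255), (0, 255, 0), (0, 100, 255), (0, 0, 255)][::-1]


def color_for_score(score, colors=COLORS):
    """Pick a colour by the floored score, clamped to the valid index range."""
    index = math.floor(float(score))
    index = max(0, min(index, len(colors) - 1))
    return colors[index]


if __name__ == "__main__":
    for s in (0.4, 2.7, 4.9, 5.0, 7.2):
        print(s, color_for_score(s))
```

Clamping keeps the mapping for in-range scores unchanged and only affects the edge cases, so the output images look the same unless the model produces an unusually high score.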

Some results obtained:

Princess Thuy Tung Tung Son :v

Tom Hiddleston with a pretty high score

I have no idea who this lady is; I just typed "model" into Google and she came up :v

You can go here to test it out: http://beauty.quangph.ml/

Source code: Here

That is the end of my article. If anything is wrong, I hope you will comment below, and please give me an upvote so there is a bright future between us. Thank you very much, and see you in the next article :))