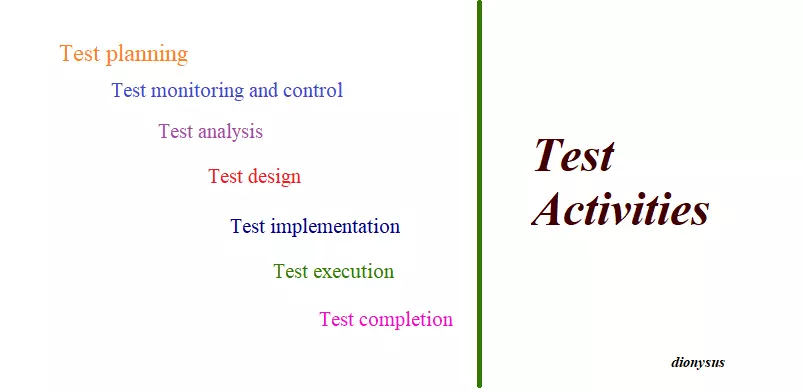

Test activities

Agile development proceeds in small iterations of software design, construction, and testing that run continuously and are supported by ongoing planning. The test activities therefore also recur continuously in this development method.

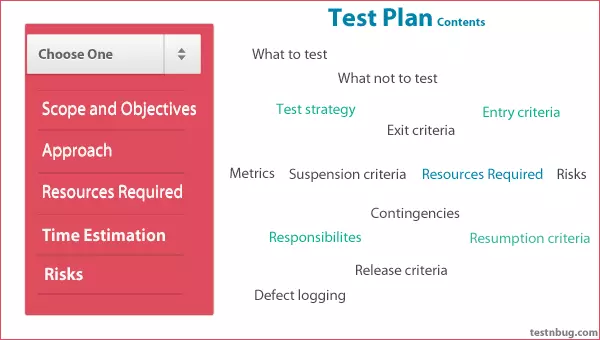

1. Test planning

Task

- Define the test objectives and the approach for meeting them, for example by specifying suitable test techniques and tasks and by drawing up a test schedule that meets the deadlines.

- Revise the test plan as needed, based on feedback from monitoring and control activities.

Work product

One or more test plans, which include the following information:

- Information about the test basis, to which the other test work products are related through traceability information

- Exit criteria (the definition of done), which are used during test monitoring and control

2. Test monitoring and control

Task

- Compare actual progress against the test plan on an ongoing basis, using the monitoring metrics defined in the test plan.

- Take the actions necessary to meet the objectives of the test plan.

- Evaluate the exit criteria for test execution at the given test level, which may include:

  - Checking test results and logs against the specified coverage criteria

  - Assessing the quality of the component or system based on test results and logs

  - Determining whether further testing is necessary

- Provide test progress reports, including deviations from the plan and information to support any decision to stop testing.

Work product

- Test progress reports (produced on an ongoing and/or regular basis)

- Test summary reports (produced at the various completion milestones)
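The exit-criteria evaluation described above can be sketched in a few lines of Python. This is only an illustration: the `ExitCriteria` class, its three thresholds, and the status strings are assumptions made for the example, not values prescribed by the process; a real test plan defines its own criteria.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExitCriteria:
    """Hypothetical thresholds; a real test plan defines its own."""
    min_pass_rate: float     # share of executed tests that must pass
    min_coverage: float      # share of test conditions that must be covered
    max_open_critical: int   # critical defects allowed to remain open


def evaluate_exit_criteria(statuses, coverage, open_critical, criteria):
    """Return (met, details) for one test level."""
    # Blocked tests were never executed, so they are left out of the pass rate.
    executed = [s for s in statuses if s in ("pass", "fail")]
    pass_rate = executed.count("pass") / len(executed) if executed else 0.0
    details = {
        "pass_rate": pass_rate >= criteria.min_pass_rate,
        "coverage": coverage >= criteria.min_coverage,
        "open_critical": open_critical <= criteria.max_open_critical,
    }
    return all(details.values()), details


criteria = ExitCriteria(min_pass_rate=0.9, min_coverage=0.8, max_open_critical=0)
statuses = ["pass", "pass", "pass", "fail", "blocked"]
met, details = evaluate_exit_criteria(
    statuses, coverage=0.85, open_critical=0, criteria=criteria
)
print(met, details)
```

Here three of the four executed tests pass, so the pass-rate criterion is not met and the result indicates that further testing is needed. The `details` dictionary is the kind of information a test progress report could carry.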

3. Test analysis

Task

- Analyze the test basis appropriate to the test level being considered:

  - Requirement specifications

  - Design and implementation information

  - The implementation of the component or system itself

  - Risk analysis reports

- Evaluate the test basis and test items to identify defects of various types, such as:

  - Ambiguities

  - Omissions

  - Inconsistencies

  - Inaccuracies

  - Contradictions

  - Superfluous statements

- Identify the features and sets of features to be tested

- Define and prioritize test conditions (considering functional, non-functional, and structural characteristics, other business and technical factors, and levels of risk)

- Capture two-way traceability between each element of the test basis and the associated test conditions

Work product

- Defined and prioritized test conditions, each ideally traceable in both directions to the element(s) of the test basis it covers

- Test charters (for exploratory testing)

- Defect reports against the test basis
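Two-way traceability can be pictured as a pair of lookup tables built from the same set of links. The sketch below assumes that test basis elements and test conditions are identified by plain strings; the IDs (`REQ-1`, `COND-A`, and so on) and function names are invented for the example.

```python
from collections import defaultdict


def build_traceability(links):
    """Build both directions from (basis_id, condition_id) pairs."""
    forward = defaultdict(set)    # test basis element -> test conditions
    backward = defaultdict(set)   # test condition -> test basis elements
    for basis_id, condition_id in links:
        forward[basis_id].add(condition_id)
        backward[condition_id].add(basis_id)
    return dict(forward), dict(backward)


def untraced_basis_elements(basis_ids, forward):
    """List test basis elements that no test condition covers yet."""
    return sorted(b for b in basis_ids if b not in forward)


# Hypothetical links between requirements and test conditions.
links = [("REQ-1", "COND-A"), ("REQ-1", "COND-B"), ("REQ-2", "COND-B")]
forward, backward = build_traceability(links)
gaps = untraced_basis_elements(["REQ-1", "REQ-2", "REQ-3"], forward)
print(gaps)
```

The forward table answers "which conditions cover this requirement?", the backward table answers "which requirements justify this condition?", and `gaps` shows a requirement (`REQ-3`) that still has no test condition.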

4. Test design

Task

- Design and prioritize test cases and sets of test cases

- Identify the test data needed to support the test conditions and test cases

- Design the test environment and identify any required infrastructure and tools

- Capture two-way traceability between the test basis, test conditions, test cases, and test procedures

Work product

- High-level test cases, without concrete values for input data and expected results

- Two-way traceability between each test case and the test condition(s) it covers

- Test data, the design of the test environment, and the identification of infrastructure and tools, although the extent to which these results are documented varies significantly

- Refined test conditions: the conditions defined during test analysis may be further refined during test design

5. Test implementation

Task

- Develop and prioritize test procedures and, potentially, create automated test scripts

- Create test suites from the test procedures and (if any) the automated test scripts

- Arrange the test suites within a test execution schedule in a way that results in efficient test execution

- Build the test environment and verify that everything needed has been set up correctly

- Prepare the test data and ensure it is properly loaded in the test environment

- Verify and update two-way traceability between the test basis, test conditions, test cases, test procedures, and test suites
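Arranging test suites into an execution schedule usually has to respect both priorities and dependencies between suites. One possible way to sketch this is with the standard library's `graphlib.TopologicalSorter` (Python 3.9+). The suite names, the `priority` and `after` fields, and the rule "lower number runs first" are assumptions for illustration, and every suite named in `after` is assumed to be defined in the same dictionary.

```python
from graphlib import TopologicalSorter


def build_schedule(suites):
    """Order suites so dependencies run first, then by priority (1 = highest)."""
    sorter = TopologicalSorter(
        {name: set(info["after"]) for name, info in suites.items()}
    )
    sorter.prepare()
    order = []
    while sorter.is_active():
        # Among the suites whose dependencies are done, run the most important first.
        ready = sorted(sorter.get_ready(), key=lambda n: (suites[n]["priority"], n))
        for name in ready:
            order.append(name)
            sorter.done(name)
    return order


# Hypothetical test suites.
suites = {
    "smoke": {"priority": 1, "after": []},
    "login": {"priority": 2, "after": ["smoke"]},
    "search": {"priority": 2, "after": ["smoke"]},
    "reports": {"priority": 3, "after": ["login"]},
}
schedule = build_schedule(suites)
print(schedule)
```

The smoke suite runs first because everything else depends on it, and `reports` runs only after `login` has completed.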

Work product

- Test procedures and their sequencing

- Test suites

- Test execution schedule

- Test data, used to assign concrete values to the inputs and expected results of test cases

- Concrete expected results associated with concrete test data, determined by using a test oracle
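The step from a high-level test case to concrete test cases can be illustrated with a small example. The business rule below (10% off orders of 100.00 or more, with amounts in cents) is entirely made up, and `oracle_discounted_total` is a hypothetical oracle standing in for whatever source of expected results a project really has, such as a specification or a reference system.

```python
def oracle_discounted_total(total_cents):
    """Hypothetical test oracle: 10% off orders of 100.00 or more."""
    if total_cents >= 10_000:
        return total_cents - total_cents // 10
    return total_cents


# High-level test case: no concrete input values or expected results yet.
HIGH_LEVEL_CASE = "An order total at or above the threshold receives the discount"


def make_concrete_cases(inputs):
    """Pair each concrete input with the expected result given by the oracle."""
    return [
        {"input": value, "expected": oracle_discounted_total(value)}
        for value in inputs
    ]


# Concrete test data chosen around the threshold.
concrete_cases = make_concrete_cases([9_999, 10_000, 25_000])
for case in concrete_cases:
    print(case)
```

The same high-level test case yields three concrete test cases here, one just below the threshold, one exactly on it, and one well above it.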

6. Test execution

Task

- Record the IDs and versions of the test item(s) or test object, test tool(s), and testware

- Execute tests either manually or by using test execution tools

- Compare actual results with expected results

- Analyze anomalies to identify their likely causes

- Report bugs based on observed failures

- Log test results (e.g. pass, fail, blocked)

- Repeat test activities either as a result of action taken for an anomaly or as part of the planned testing (for example, confirmation testing or regression testing)

- Verify and update two-way traceability between the test basis, test conditions, test cases, test procedures, and test results

Work product

- Documentation of the status of individual test cases or test procedures (e.g., ready to run, pass, fail, blocked, deliberately skipped)

- Defect reports

- Documentation of which test item(s), test object(s), test tools, and testware were involved in the testing
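A minimal sketch of the execution loop, comparing actual with expected results and logging the outcome together with the version of the test object, might look as follows. The function `normalize_name` is an invented test object, the version string is a placeholder, and treating an unexpected exception as a failure is a simplifying assumption of this example.

```python
def execute_tests(test_cases, test_object, object_version):
    """Run each test case and log its status with the test object version."""
    log = []
    for case in test_cases:
        try:
            actual = test_object(case["input"])
            status = "pass" if actual == case["expected"] else "fail"
        except Exception as exc:
            # Assumption: an unexpected exception counts as a failure.
            actual = repr(exc)
            status = "fail"
        log.append({
            "test_id": case["id"],
            "object_version": object_version,
            "expected": case["expected"],
            "actual": actual,
            "status": status,
        })
    return log


def normalize_name(text):
    """Hypothetical test object: trims whitespace and lowercases a name."""
    return text.strip().lower()


cases = [
    {"id": "TC-1", "input": "  Alice ", "expected": "alice"},
    {"id": "TC-2", "input": "BOB", "expected": "Bob"},
]
log = execute_tests(cases, normalize_name, object_version="1.4.2")
for entry in log:
    print(entry["test_id"], entry["status"])
```

`TC-2` fails because the actual result is `bob`, not `Bob`. The next step is to analyze whether the defect lies in the test object or in the expected result of the test case itself.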

7. Test completion

Task

- Check whether all defect reports are closed, and enter change requests or product backlog items for any defects that remain unresolved at the end of test execution

- Create a test summary report to be communicated to stakeholders

- Finalize and archive the test environment, test data, test infrastructure, and other testware for later reuse

- Hand over the testware to the maintenance teams, other project teams, and/or other stakeholders who could benefit from its use

- Analyze lessons learned from completed testing activities to identify changes needed for future iterations, releases, and projects

- Use the information gathered to improve the maturity of the testing process

Work product

- Test summary reports

- Action items for improving subsequent projects or iterations

- Change requests or product backlog items

- Finalized testware
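The first completion task, checking that defect reports are closed and turning the remaining ones into backlog items, can be sketched as below. The defect report fields (`id`, `summary`, `status`) and the shape of a backlog item are assumptions for illustration, not the format of any particular defect tracker.

```python
def backlog_items_for_open_defects(defect_reports):
    """Create one product backlog item per defect report that is not closed."""
    return [
        {"title": f"Resolve {d['id']}: {d['summary']}", "source": d["id"]}
        for d in defect_reports
        if d["status"] != "closed"
    ]


# Hypothetical defect reports at the end of test execution.
defect_reports = [
    {"id": "DEF-7", "summary": "Login times out", "status": "closed"},
    {"id": "DEF-9", "summary": "Report total is rounded incorrectly", "status": "open"},
]
backlog = backlog_items_for_open_defects(defect_reports)
print(backlog)
```

Only `DEF-9` is carried forward, so the unresolved defect stays visible in the next iteration instead of being lost when testing ends.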