I want to be able to read ehon to my daughter every day

My daughter is about 2 years old and loves ehon (Japanese picture books). Every night before bed she picks a book and brings it to me to read. Because of work, I sometimes have to go on business trips, and then we cannot see each other for two or three days. If she could still hear her father's voice reading the story while I was away, then maybe the next day she would still choose me (instead of Mom, Grandma, and so on) and bring me an ehon to read. That is why I made a web application called “Dad Tell Me”. The app uses machine learning to recognize which of her favorite ehon my daughter is holding, then plays a recording of me reading that book.

Video

https://twitter.com/i/status/1325425425196015616

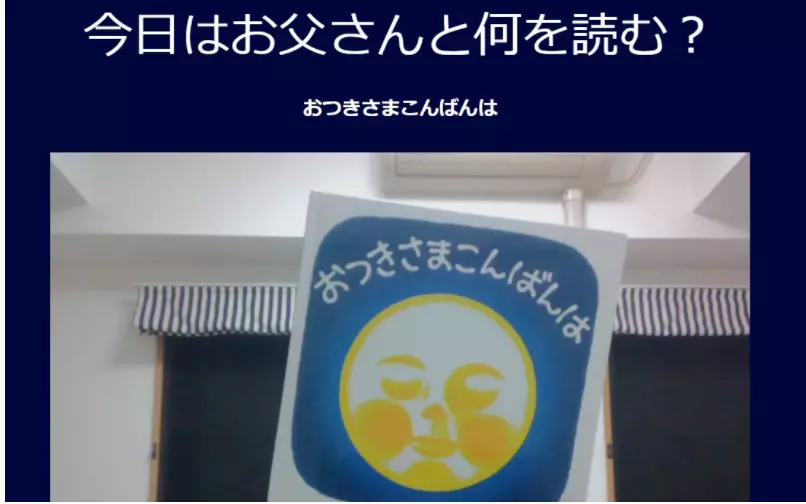

This app is meant for my daughter to use, so I kept the screen as simple as possible. As soon as she holds an ehon up to the camera, my voice starts reading the story. Below is the actual screen in use.

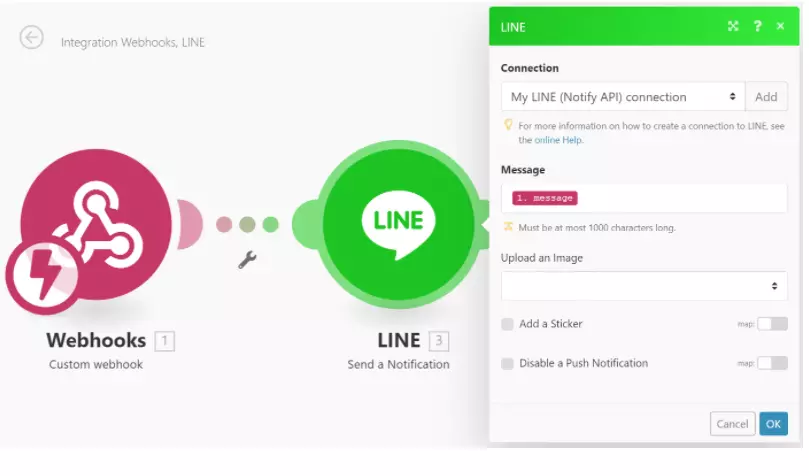

I also wanted to know when my daughter used the app, so I added a notification feature with LINE Notify. Hehe, this one is a reward for myself. On the train home I check it with a smile: “Ah, today my daughter listened to these stories!” It is one of the small joys of being a first-time father.

The components that make up this app

1. Use Teachable Machine. Train it on the ehon my daughter likes, then publish the model to get the link used to embed it.

2. Load the trained model with ml5.js. This runs on the frontend.

sample.html

```html
<!-- VERSION is a placeholder: pin the ml5 release you use -->
<script src="https://unpkg.com/ml5@VERSION/dist/ml5.min.js"></script>
```

sample.js

```js
// URL of the model you created
const imageModelURL = 'https://teachablemachine.withgoogle.com/models/XXXXX/';

// Load the custom model (`video` is the <video> element showing the camera feed)
const classifier = ml5.imageClassifier(imageModelURL + 'model.json', video, () => {
  // Loading finished
  console.log('Model Loaded!');
});
```

3. Play the audio file that corresponds to the model's classification result.

sample.html

```html
<audio id="sound-file1" preload="auto">
  <source src="https://dotup.org/uploda/dotup.org2302152.mp3" type="audio/mp3">
</audio>
```

sample.js

```js
function storytelling1() {
  // Play the audio file
  document.getElementById('sound-file1').play();
  // Call the webhook
  sendWebhook('Reading「Do you want a Hug?」');
}
```
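The step from classification result to audio can also be written as a lookup table, which avoids one function per book. The following is only a sketch under assumptions: the names `AUDIO_BY_LABEL`, `MIN_CONFIDENCE`, and `pickAudioId` are hypothetical, the 0.9 threshold is an arbitrary starting point, and the results are assumed to be an array of `{ label, confidence }` objects sorted by confidence, as ml5's image classifier returns them.

```javascript
// Hypothetical lookup table: class label (exactly as named in Teachable Machine)
// mapped to the id of the <audio> element to play. Add one entry per book.
const AUDIO_BY_LABEL = {
  'Do you want a Hug?': 'sound-file1',
};

// Assumed threshold, not from the article; tune it against your own model.
const MIN_CONFIDENCE = 0.9;

// Returns the id of the audio element to play, or null when nothing should play.
function pickAudioId(results, table = AUDIO_BY_LABEL, minConfidence = MIN_CONFIDENCE) {
  if (!Array.isArray(results) || results.length === 0) return null;
  const top = results[0];
  // Ignore weak guesses so a blurry frame does not start a story.
  if (typeof top.confidence === 'number' && top.confidence < minConfidence) return null;
  return Object.prototype.hasOwnProperty.call(table, top.label) ? table[top.label] : null;
}

// In the browser, inside the classification callback:
// const id = pickAudioId(results);
// if (id) document.getElementById(id).play();
```

With a table like this, adding a new book means adding one `<audio>` element and one entry in the table.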

4. When the audio is played, use axios to call the Integromat webhook URL.

sample.html

```html
<script src="https://unpkg.com/axios/dist/axios.min.js"></script>
```

sample.js

```js
// Pass the message you want to send as the argument
async function sendWebhook(message) {
  // Send to Integromat
  try {
    // Integromat webhook URL you obtained; `params` URL-encodes the message
    const res = await axios.get('https://hook.integromat.com/XXXXXXXXXXXXXXXXXXXXXXXXXX', {
      params: { message },
    });
    console.log(res.data);
  } catch (err) {
    console.error(err);
  }
}
```
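Because the message travels in the query string, characters such as spaces, `&`, `?`, or the 「」 brackets need to be percent-encoded, otherwise part of the message can be lost or misread. Below is a minimal sketch of building the URL with the standard `URL` API. The helper name `buildWebhookUrl` is hypothetical, and `WEBHOOK_URL` is just the placeholder from the article.

```javascript
// Placeholder webhook URL from the article; substitute your own.
const WEBHOOK_URL = 'https://hook.integromat.com/XXXXXXXXXXXXXXXXXXXXXXXXXX';

// Hypothetical helper: returns the webhook URL with `message` safely encoded
// as a query parameter.
function buildWebhookUrl(message, base = WEBHOOK_URL) {
  const url = new URL(base);
  // searchParams.set() percent-encodes the value for us.
  url.searchParams.set('message', message);
  return url.toString();
}

// Usage with axios in the browser:
// const res = await axios.get(buildWebhookUrl('Reading「Do you want a Hug?」'));
```

The receiving side decodes the parameter back to the original text, so the LINE message reads the same as the string passed in.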

5. In Integromat, connect the webhook URL to LINE Notify, so that a message arrives saying that the 「読み聞かせ」 (read-aloud) app “Dad Tell Me” has been run, that is, that my daughter used the app.

Source code

storytelling.html

```html
<!DOCTYPE html>
<html>
  <head>
    <meta charset="UTF-8" />
    <meta name="viewport" content="width=device-width, initial-scale=1.0" />
    <title>Storytelling</title>
  </head>
  <body>
    <h1>Storytelling</h1>
    <div id="console_log"></div>
    <video id="myvideo" width="640" height="480" muted autoplay playsinline></video>
    <audio id="sound-file1" preload="auto">
      <source src="https://dotup.org/uploda/dotup.org2302152.mp3" type="audio/mp3">
    </audio>
    <audio id="sound-file2" preload="auto">
      <source src="https://dotup.org/uploda/dotup.org2302153.mp3" type="audio/mp3">
    </audio>
    <audio id="sound-file3" preload="auto">
      <source src="https://dotup.org/uploda/dotup.org2302151.mp3" type="audio/mp3">
    </audio>
    <!-- VERSION is a placeholder: pin the ml5 release you use -->
    <script src="https://unpkg.com/ml5@VERSION/dist/ml5.min.js"></script>
    <script src="https://unpkg.com/axios/dist/axios.min.js"></script>
    <script>
      // URL of the model you created
      const imageModelURL = 'https://teachablemachine.withgoogle.com/models/XXXXXXX/';

      // Show log output on the page instead of in the developer console
      console.log = function (log) {
        document.getElementById('console_log').textContent = log;
      };

      let classifier;

      async function main() {
        // Get the camera stream
        const stream = await navigator.mediaDevices.getUserMedia({
          audio: false,
          video: true,
        });
        // Get the DOM element with ID "myvideo"
        const video = document.getElementById('myvideo');
        // Show the camera stream in the video element
        video.srcObject = stream;

        // Load the custom model
        classifier = ml5.imageClassifier(imageModelURL + 'model.json', video, () => {
          // Loading finished
          console.log('Model Loaded!');
        });

        // Run classification continuously
        function onDetect(err, results) {
          if (!err && results && results[0]) {
            console.log(results[0].label);
            // Play the recorded reading; each label must match
            // a class name in the Teachable Machine model
            if (results[0].label === 'Do you want a Hug?') {
              storytelling1();
            }
            if (results[0].label === 'おつきさまこんばんは/Xin chào ông trăng') {
              storytelling2();
            }
            if (results[0].label === 'だるまさんと/Lật đật Daruma') {
              storytelling3();
            }
          }
          classifier.classify(onDetect);
        }
        classifier.classify(onDetect);
      }

      // Pass the message you want to send as the argument
      async function sendWebhook(message) {
        // Send to Integromat
        try {
          // Integromat webhook URL you obtained; `params` URL-encodes the message
          const res = await axios.get('https://hook.integromat.com/XXXXXXXXXXXXXXXXXXXXXXXXXX', {
            params: { message },
          });
          console.log(res.data);
        } catch (err) {
          console.error(err);
        }
      }

      function storytelling1() {
        // Play the audio file
        document.getElementById('sound-file1').play();
        sendWebhook('Reading「Do you want a Hug?」');
      }

      function storytelling2() {
        // Play the audio file
        document.getElementById('sound-file2').play();
        sendWebhook('Reading「おつきさまこんばんは」/ Hello, Mr. Moon');
      }

      function storytelling3() {
        // Play the audio file
        document.getElementById('sound-file3').play();
        sendWebhook('Reading「だるまさんと」/ Daruma doll');
      }

      // Run
      main();
    </script>
  </body>
</html>
```
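Since the classification loop runs continuously, the same book is likely to be detected many times while it stays in front of the camera, and as written each detection calls a `storytelling` function and therefore the webhook again. One possible guard, which is not part of the original app, is a per-label cooldown. The sketch below is only an illustration: `createCooldown` and the 10-minute window are assumptions.

```javascript
// Hypothetical helper: returns a function that answers "may this label fire now?"
// and allows each label at most once per `cooldownMs` milliseconds.
// `now` is injectable so the logic can be exercised without waiting.
function createCooldown(cooldownMs, now = () => Date.now()) {
  const lastFired = new Map(); // label -> timestamp of the last accepted trigger
  return function shouldFire(label) {
    const t = now();
    const last = lastFired.get(label);
    if (last !== undefined && t - last < cooldownMs) return false;
    lastFired.set(label, t);
    return true;
  };
}

// Usage inside the classification callback (assumed 10-minute window):
// const shouldFire = createCooldown(10 * 60 * 1000);
// if (results[0].label === 'Do you want a Hug?' && shouldFire(results[0].label)) {
//   storytelling1();
// }
```

The window should be at least as long as the longest recording, so that a story is not restarted or reported twice while it is still playing.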

Impressions from building an app with machine learning

When I first researched and dabbled in machine learning, I thought there would be a huge amount to learn. However, with tools like Teachable Machine, I was able to create a trained model easily. I also personally feel that using that model from a library like ml5.js is not too difficult either.

Each type of model probably has its own limitations. Even so, I want to keep using these tools while learning more.

P.S.

・ I also considered another option: photograph the pages of the picture book, add my voice, and play the result as a video. However, my daughter usually reads ehon before going to bed, and if she keeps looking at a glowing screen she will not fall asleep. So I chose to play only the audio file (for the pictures, she still has to open the actual ehon).

・ For me, reading ehon to my child is in the top 3 of the things I want to do when I am with her. I will keep thinking of more ideas so that I can give my kids a better and more fun time.

Link of the original article: https://sal.vn/SWZi5X