By watching a 2D video, Facebook’s new AI can turn it into 3D images

- Tram Ho

“Hit me,” Morpheus said. “If you can.” Neo loaded a martial arts program and launched a series of moves at his teacher. Morpheus blocked every attack without the slightest effort. This training scene comes from the 1999 film The Matrix, which stunned many viewers at the time by combining a storyline about artificial intelligence (AI) with advanced computer graphics.

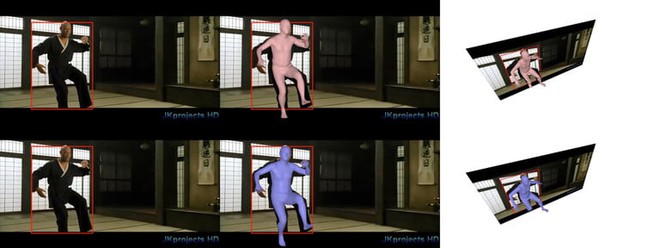

More than 20 years later, Facebook used the scene to demo its groundbreaking AI image recognition technology. On screen, the scene plays out as usual. Or rather, almost as usual: while Morpheus and the background remain unchanged, Keanu Reeves, who appears in the 2D footage, has been transformed into a 3D model. Although the demo was only a rough sketch, Andrea Vedaldi, one of Facebook’s AI experts (specifically in computer vision and machine learning), said that the 3D scene converted from 2D can be rendered in real time.

That means Facebook’s AI algorithms can watch an ordinary video and, while the video is playing, work out how to turn it into a complete 3D scene, frame by frame. The Matrix demo is a particularly impressive example of the algorithm at work, because kung-fu moves are extremely difficult to interpret even for humans, let alone for a machine that can only extrapolate. The result is not perfect, but it is quite good!

“This is a very, very challenging video because it shows you martial arts stances,” Vedaldi said. “This is not something you would normally see in a user application. It was made for fun, just to demonstrate the performance of the system.”

On simpler tasks, such as turning a video of your kid playing soccer into wireframe models, or doing the same with a photo taken while traveling, Facebook’s algorithm performs much better. And it keeps improving over time.

Extracting data from images

Facebook’s focus on algorithms like this may seem strange. Shouldn’t it be improving its news feed algorithm, or finding new ways to suggest brands or content you might want to interact with? Why turn 2D images into 3D? This is clearly not the kind of research you would expect a social media giant to invest in. Yet it does, even though it has no plans to turn this research into a feature of Facebook’s user interface.

In fact, for the past seven years, Facebook has been one of the leading names in artificial intelligence. In 2013, Yann LeCun, one of the world’s leading deep learning experts, joined Facebook to research AI at a scale that is virtually unattainable for 99% of AI labs worldwide. Since then, Facebook has expanded its AI division, called FAIR (Facebook AI Research), around the world. Today, the company has 300 full-time engineers and scientists actively working to bring about the fascinating artificial intelligence technologies of the future. FAIR’s offices include Seattle, Pittsburgh, Montreal, Boston, London, and Tel Aviv, Israel, and are staffed with leading researchers in the field.

Finding better ways to understand the content of your photos is a major focus for Facebook. Since 2017, Facebook has used neural networks to automatically recognize users in photos, even when no one else has tagged them. Since then, the social media giant’s image recognition technology has become far more sophisticated.

Ironically, one of the most recent occasions on which this technology became visible was when Facebook went down. In July 2019, a temporary outage prevented many photos from displaying on Facebook. In their place were empty photo frames accompanied by machine learning tags describing what the AI thought was in the image. Some tags read: “The photo may contain: trees, sky, outdoors, nature, cat, person standing.” It was just like the final scene of the first Matrix film, when Neo reaches a new level of awareness and sees the world as a continuous stream of code!

Facebook has now gone a step further. According to a slide accompanying the Matrix demo mentioned above: “We want to understand everything in 3D, the first time we see it.” And this is not only about human perception: “We really want AI to understand the world like we do,” Vedaldi said.

In other words, when Facebook gives its AI a photo of an airplane, it wants the AI to recognize that it is an airplane, understand its shape in 3D, and predict how it will move. The same goes for a chair. A bird. A car. Or someone doing yoga.

Will the technology come to an app you use often?

No. This demo won’t become a Facebook feature in the foreseeable future, but teaching AI to understand the world through the images it sees is clearly part of Facebook’s overall business model. More than 250 billion photos have been posted to the platform since its launch, with approximately 350 million more posted every day. Facebook also owns Instagram, where approximately 40 billion photos and videos have been posted since its launch, and 95 million photos are posted every day.

Because Facebook is one of the main ways people communicate with each other on the internet, understanding what is happening in pictures will bring immense value to its mission in many ways. Understanding and interacting with images in 3D will also allow Facebook to develop powerful new technologies such as AR. Imagine an AR app that turns your 2D Facebook photos from years ago into 3D images and lets you revisit the scene in AR. Would Facebook build such a thing? It’s unclear, but the technology to make that happen, and much more, already exists.

“Our research direction is quite consistent with our company’s priorities,” said Natalia Neverova, head of research at FAIR Paris. “We expect at least a large share of our research to be applied to products. But I cannot name specific timelines or applications.”

Reference: DigitalTrends

Source: Genk