Storing and retrieving information are two of the most important tasks for any web application, and they directly affect the success of a project. A web app may have to store a very large number of records, which makes both storage and search difficult. Elasticsearch was created to solve this problem. In this article, I will guide you through the process of integrating a simple Ruby on Rails app with Elasticsearch.

Defining the terms

Before we start coding a Ruby on Rails app and implementing search, we will go through the main terms and install the necessary services.

Elasticsearch is an open source, JSON-based search engine built on Lucene. It can store, search and analyze data in near real time, and it supports complex search terms and conditions. That’s why Elasticsearch is popular with organizations such as NASA, Microsoft, eBay, Uber, GitHub, Facebook, Warner Brothers, etc.

Let’s take a look at some of Elasticsearch’s key terms:

Mapping is a process of determining how documents and fields are stored and indexed.

Indexing is the action of storing data in Elasticsearch. A cluster can contain many indexes, and each index can contain types. A type is similar to a table in a database and has a list of fields defined for the documents of that type. (Indexes created in Elasticsearch 6.0 or later can hold only a single type.)

Cluster is a collection of nodes that together store all the data and provide indexing and search across the nodes.

Node is a single server within the cluster. It stores data and takes part in the cluster's indexing and search operations.

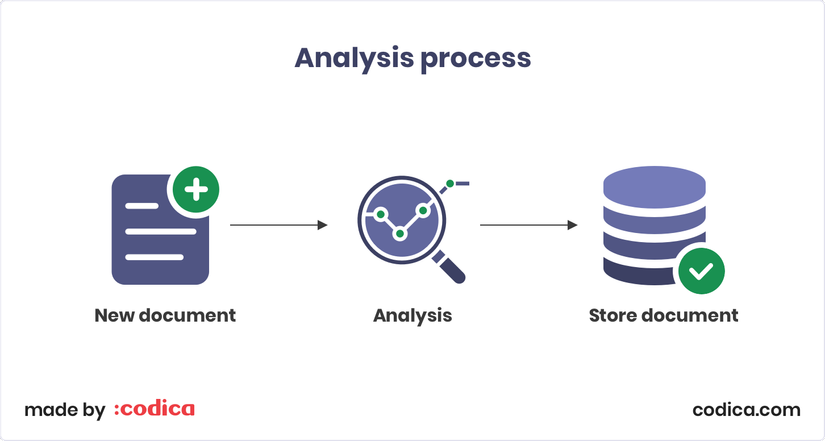

Analysis is the process of converting text into tokens or terms that can be searched.

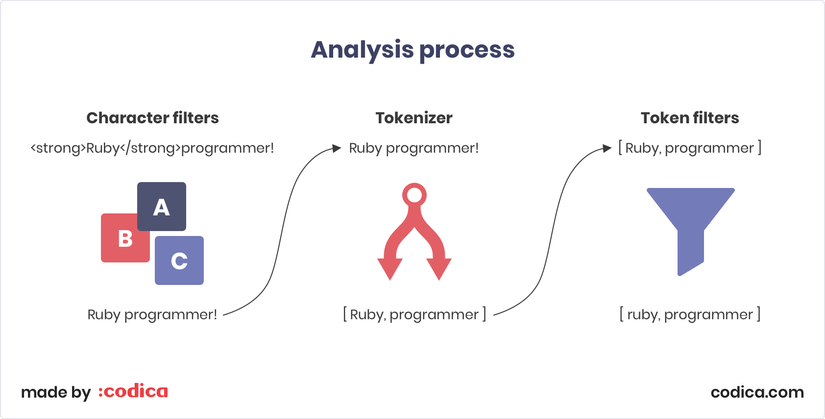

Analyzer: an analyzer consists of character filters, a tokenizer, and token filters.

Character filters: the text first passes through one or more character filters. A character filter takes the original text of a field and transforms it by adding, deleting or modifying characters. For example, it can remove HTML markup from the text.

Tokenizer: the filtered text is then split into tokens, which are usually words.

Token filters: these are similar to character filters. The main difference is that token filters work with token streams, while character filters work with character streams.

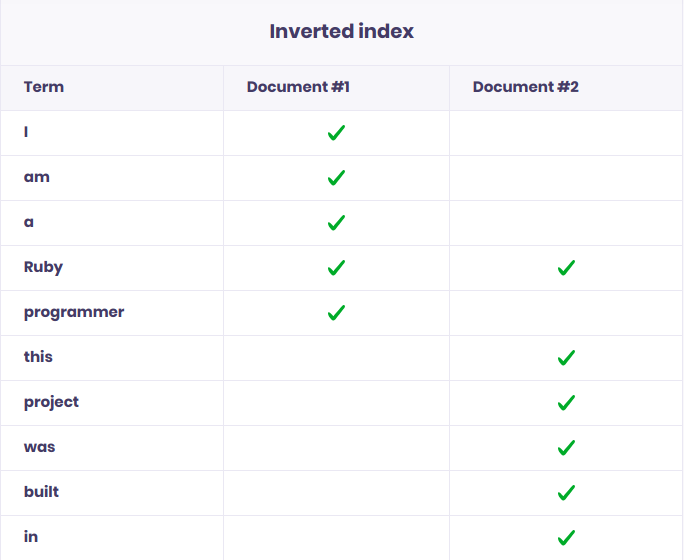
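To make the three stages concrete, here is a minimal sketch of an analyzer in plain Ruby. It is only an illustration of the idea, not how Elasticsearch implements analysis; the method names strip_html, tokenize, lowercase_filter and analyze are hypothetical.

```ruby
# Character filter: works on the raw character stream.
# Here it replaces HTML tags with spaces.
def strip_html(text)
  text.gsub(/<[^>]*>/, ' ')
end

# Tokenizer: splits the filtered text into tokens (roughly, words).
def tokenize(text)
  text.scan(/[[:alnum:]]+/)
end

# Token filter: works on the token stream. Here it lowercases each token.
def lowercase_filter(tokens)
  tokens.map(&:downcase)
end

# Hypothetical analyzer that chains the three stages in order.
def analyze(text)
  lowercase_filter(tokenize(strip_html(text)))
end

p analyze('<p>I am a <b>Ruby</b> programmer</p>')
# => ["i", "am", "a", "ruby", "programmer"]
```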

Inverted index: an inverted index stores documents in a structure that maps each term to the documents containing it, so that full-text search is as fast and efficient as possible.

Consider the following example. I have two sentences: “I am a Ruby programmer” and “This project was built in Ruby”. In the inverted index, they are stored as follows:

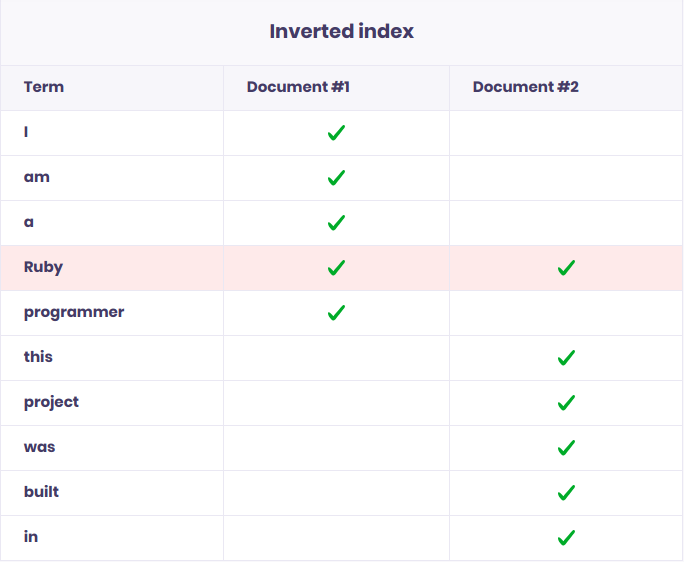

If we search with the keyword “Ruby”, we will see that the keyword is found in both sentences.
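The same idea can be sketched in a few lines of plain Ruby. This is a simplified illustration with a naive analysis step (lowercasing and splitting on non-alphanumeric characters), not the data structure Lucene actually uses; build_inverted_index is a hypothetical helper name.

```ruby
# Build a term => [document ids] map from a hash of id => text.
def build_inverted_index(documents)
  index = Hash.new { |hash, term| hash[term] = [] }
  documents.each do |id, text|
    # Naive analysis: lowercase, split into words, drop duplicates.
    text.downcase.scan(/[[:alnum:]]+/).uniq.each do |term|
      index[term] << id
    end
  end
  index
end

documents = {
  1 => 'I am a Ruby programmer',
  2 => 'This project was built in Ruby'
}

index = build_inverted_index(documents)

# Looking up a term is a single hash access, not a scan of every document.
p index['ruby']       # => [1, 2]
p index['programmer'] # => [1]
```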

Step #1: Installing the tools

Before getting started with coding, we need to set up the environment and service.

Install Ruby

Install Rails

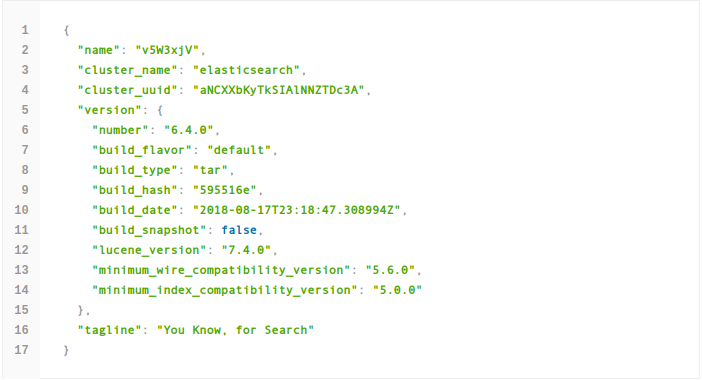

Install Elasticsearch 6.4.0

To verify that Elasticsearch has been installed successfully, visit http://localhost:9200/

The response shows some details of the Elasticsearch configuration:

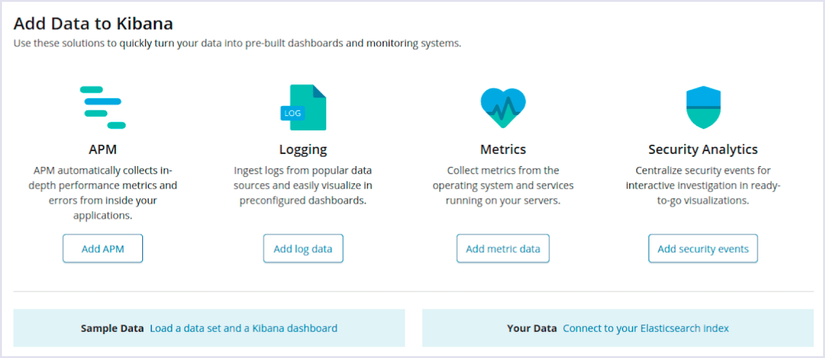
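The same check can be scripted. The sketch below assumes a node listening on the default http://localhost:9200/ and reads the version number from the JSON returned by the root endpoint; the method names are hypothetical.

```ruby
require 'json'
require 'net/http'
require 'uri'

# Extract the version number from the JSON body returned by the root endpoint.
def elasticsearch_version(body)
  JSON.parse(body).dig('version', 'number')
end

# Fetch the root endpoint of a local node and return its version string.
def fetch_elasticsearch_version(url = 'http://localhost:9200/')
  elasticsearch_version(Net::HTTP.get(URI(url)))
end

# Usage, with Elasticsearch running locally:
#   puts fetch_elasticsearch_version
```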

Install Kibana 6.4.2

Kibana is the web user interface for Elasticsearch. It queries Elasticsearch for data that matches the user’s request.

To make sure Kibana is installed and running, visit http://localhost:5601/

Step #2: Initiating a new Rails app

In this application, we will use PostgreSQL as the database for a Rails API app:

```shell
rvm use 2.6.1
rails new elasticsearch_rails --api -T -d postgresql
cd elasticsearch_rails
bundle install
```

Configure the database in config/database.yml:

```yaml
default: &default
  adapter: postgresql
  encoding: unicode
  pool: <%= ENV.fetch("RAILS_MAX_THREADS") { 5 } %>
  username: postgres

development:
  <<: *default
  database: elasticsearch_rails_development

test:
  <<: *default
  database: elasticsearch_rails_test

production:
  <<: *default
  database: elasticsearch_rails_production
  username: elasticsearch_rails
  password: <%= ENV['DB_PASSWORD'] %>
```

Then run rails db:create. Next, we create a Location model with two fields: name and level.

```shell
rails generate model location name level
```

Run rails db:migrate to create the table. Next we need some seed data in db/seeds.rb to test the application. The data has been prepared here. Don’t forget to run rails db:seed to import the data into the database!

Step #3: Using Elasticsearch with Rails

To integrate Elasticsearch into the application, we need to add the following two gems to the Gemfile:

```ruby
gem 'elasticsearch-model'
gem 'elasticsearch-rails'
```

Do not forget to run bundle install! Now we are ready to add search behavior to the Location model. We create the searchable.rb file in app/models/concerns with the following content:

```ruby
module Searchable
  extend ActiveSupport::Concern

  included do
    include Elasticsearch::Model
    include Elasticsearch::Model::Callbacks
  end
end
```

We include the Searchable module in the Location class:

```ruby
class Location < ApplicationRecord
  include Searchable
end
```

As you can see, searchable.rb includes two modules: Elasticsearch::Model and Elasticsearch::Model::Callbacks.

- The Elasticsearch::Model module adds the Elasticsearch integration to the model.

- With Elasticsearch::Model::Callbacks, every time an object is saved, updated or deleted, the indexed data is updated accordingly.

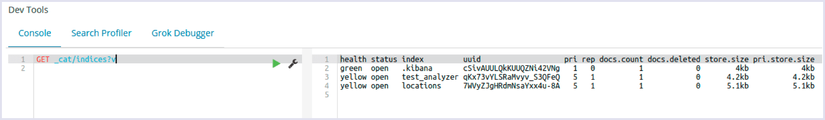

The next thing we need to do is to index the Location model. Open the Rails console with the rails c command and execute Location.import force: true. To check the result, open Kibana at http://localhost:5601/ in the browser and run GET _cat/indices?v in the Dev Tools console.

As you can see, an index named locations has been created:

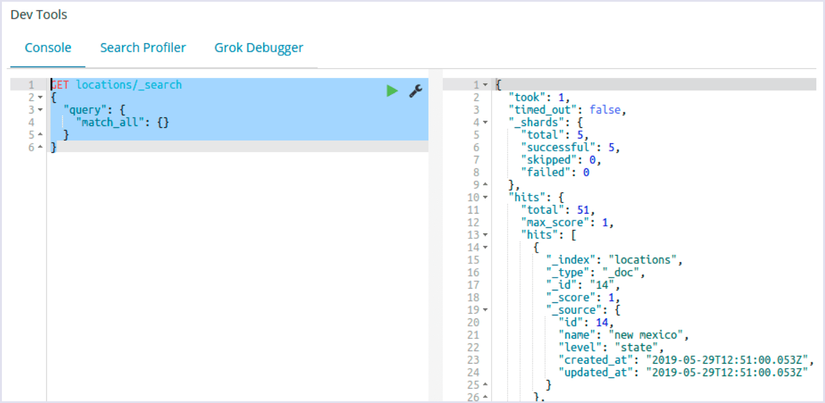

Now we can test our queries with the initial test data. You can also refer to the Elasticsearch Query DSL documentation [here](https://www.elastic.co/guide/en/elasticsearch/reference/current/query-dsl.html).

Next, run the following query:

```
GET locations/_search
{
  "query": {
    "match_all": {}
  }
}
```

The hits attribute is returned and as you can see, all the fields in the Location model are indexed.

Step #4: Building a custom index with autocomplete functionality

Before creating a new index we need to delete the previous one. Open the Rails console with the rails c command and execute Location.__elasticsearch__.delete_index! to remove the previous index.

Next we need to change app/models/concerns/searchable.rb :

```ruby
module Searchable
  extend ActiveSupport::Concern

  included do
    include Elasticsearch::Model
    include Elasticsearch::Model::Callbacks

    # Serialize only the fields we want to index.
    def as_indexed_json(_options = {})
      as_json(only: %i[name level])
    end

    # Index settings. Defined as a class method, and before the `settings`
    # call below, because `settings` runs while the class body is loading.
    def self.settings_attributes
      {
        index: {
          analysis: {
            analyzer: {
              # we define a custom analyzer named autocomplete
              autocomplete: {
                # type should be custom for custom analyzers
                type: :custom,
                # we use the standard tokenizer
                tokenizer: :standard,
                # we apply two token filters;
                # autocomplete is the custom filter defined below
                filter: %i[lowercase autocomplete]
              }
            },
            filter: {
              # we define a custom token filter named autocomplete
              autocomplete: {
                type: :edge_ngram,
                min_gram: 2,
                max_gram: 25
              }
            }
          }
        }
      }
    end

    settings settings_attributes do
      mappings dynamic: false do
        # we use the autocomplete custom analyzer defined above
        indexes :name, type: :text, analyzer: :autocomplete
        indexes :level, type: :keyword
      end
    end
  end
end
```

In the code above, the as_indexed_json method serializes the model’s attributes to JSON. Only two fields are indexed: name and level.

```ruby
def as_indexed_json(_options = {})
  as_json(only: %i[name level])
end
```

More importantly, we also define how to configure the index.
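To see why this configuration enables autocomplete, it helps to look at the terms an edge n-gram filter produces. The following is a rough approximation in plain Ruby of the autocomplete analyzer configured above (word splitting, lowercasing, then edge n-grams with min_gram 2 and max_gram 25). It is a sketch of the idea rather than Elasticsearch's own implementation, and both method names are hypothetical.

```ruby
# Edge n-grams of a single token: its prefixes from min_gram to max_gram characters.
def edge_ngrams(token, min_gram: 2, max_gram: 25)
  upper = [token.length, max_gram].min
  (min_gram..upper).map { |length| token[0, length] }
end

# Approximation of the autocomplete analyzer:
# split into words, lowercase, then expand each token into edge n-grams.
def autocomplete_terms(text)
  text.scan(/[[:alnum:]]+/).map(&:downcase).flat_map { |token| edge_ngrams(token) }
end

p edge_ngrams('york')            # => ["yo", "yor", "york"]
p autocomplete_terms('New York') # => ["ne", "new", "yo", "yor", "york"]
```

Because every prefix of at least two characters is stored as a term, a partial input such as "yo" can match "new york" without any wildcard query.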

Recreate the index with Location.import force: true, then open the Rails console and check that the search works properly:

```ruby
results = Location.search('san francisco', {})
results.map(&:name) # => ["san francisco", "american samoa"]
```

We should also check that the search copes with misspelled input:

```ruby
results = Location.search('Asan francus', {})
results.map(&:name) # => ["san francisco"]
```

Step #5: Making the search request available by API

Next, we will create the HomeController to execute the query.

```shell
rails generate controller Home search
```

Add the following code to HomeController:

app/controllers/home_controller.rb:

```ruby
class HomeController < ApplicationController
  def search
    results = Location.search(search_params[:q], search_params)

    locations = results.map do |r|
      r.merge(r.delete('_source')).merge('id': r.delete('_id'))
    end

    render json: { locations: locations }, status: :ok
  end

  private

  def search_params
    params.permit(:q, :level)
  end
end
```

Finally, run rails s and check the result at http://localhost:3000/home/search?q=new&level=state

The API response includes the locations whose name contains new and whose level is state.

```json
{
  "locations": [
    {
      "_index": "locations",
      "_type": "_doc",
      "_id": "41",
      "_score": 3.676841,
      "name": "new york",
      "level": "state",
      "id": "41"
    },
    {
      "_index": "locations",
      "_type": "_doc",
      "_id": "17",
      "_score": 3.5186555,
      "name": "new jersey",
      "level": "state",
      "id": "17"
    },
    {
      "_index": "locations",
      "_type": "_doc",
      "_id": "10",
      "_score": 2.7157228,
      "name": "new hampshire",
      "level": "state",
      "id": "10"
    }
  ]
}
```
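The request passes both q and level, but the code shown so far does not spell out how level is applied to the query. One possible way to express "name matches q, restricted to a given level" in the Query DSL is a bool query with a match clause on name and a term filter on level. The sketch below builds such a body as a plain Ruby hash. build_location_query is a hypothetical helper, not part of the elasticsearch-model gem or of the code above.

```ruby
require 'json'

# Hypothetical helper: builds a Query DSL body as a plain Ruby hash.
def build_location_query(q, level: nil)
  bool = { must: [{ match: { name: q } }] }
  # Filter clauses narrow the result set without affecting the score.
  bool[:filter] = [{ term: { level: level } }] if level
  { query: { bool: bool } }
end

puts JSON.pretty_generate(build_location_query('new', level: 'state'))
```

Since Elasticsearch::Model's search method accepts either a query string or a hash, a body like this could be passed to Location.search in place of the plain string.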

We now have a Rails application with search powered by Elasticsearch.

Advantages and disadvantages

Advantages:

- Speed: Elasticsearch returns results very quickly, because looking up a term directly returns the documents associated with that term.

- Built on Lucene: because it is built on Lucene, Elasticsearch provides powerful full-text search.

- Document-oriented: it stores complex entities as JSON documents and indexes all fields by default, which helps search performance.

- Schema-free: it stores large amounts of data as JSON in a distributed manner. It also tries to detect the structure of the data and index it accordingly, making it search-friendly.

Disadvantages:

- Elasticsearch is designed for search, so for other tasks such as CRUD (Create, Read, Update, Destroy) it is inferior to databases like MongoDB or MySQL. Therefore, people rarely use Elasticsearch as the primary database and usually combine it with another one.

- Elasticsearch has no concept of a database transaction, so it does not guarantee data integrity across insert, update and delete operations. If the application logic goes wrong partway through, data can be lost. This is another reason why Elasticsearch should not be the primary database.

- It is not well suited to systems that update data very frequently, because re-indexing the data is expensive.

These are my findings on Elasticsearch and on integrating it into a simple Rails application.

Hopefully this knowledge will help you in building your own application.
