The two concepts I am about to introduce will sound familiar to many developers. Java developers in particular are used to multithreading, which is often used to achieve parallelism. More recently, a few other languages, such as Go, have become very popular. Go handles multitasking with a different concurrency model that I find worth learning, because it gives me more options when solving performance-critical multitasking problems. In this article I will present the difference between the two concepts: concurrency and parallelism.

1. What is concurrency?

Concurrency means being able to deal with multiple jobs over the same period of time, without those jobs necessarily executing at the same instant. The following example illustrates it:

A friend is out jogging in the morning. While running, he notices that he forgot to tie his shoelaces. He has to stop running, tie his laces, and only then can he continue his run.

This is a basic example of concurrency. The runner handles both running and tying his laces by switching between them: he makes progress on several tasks during the same period, but he only works on one of them at any given moment.
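The jogging example can be sketched in Go. This is only an illustrative sketch: the function `jog` and the channels `loose` and `tied` are hypothetical names invented here. The runner and the lace-tying job are two goroutines, but they hand control back and forth over unbuffered channels, so only one of them makes progress at a time.

```go
package main

import "fmt"

// jog simulates the morning run (hypothetical example). The runner is the
// calling goroutine; the lace-tying job is a second goroutine. They take
// turns via unbuffered channels, so their work interleaves but never overlaps.
func jog(totalSteps, looseAt int) []string {
	var log []string
	loose := make(chan struct{}) // runner -> lace job: "please tie the laces"
	tied := make(chan struct{})  // lace job -> runner: "done, keep running"

	// Lace-tying job: waits until asked, does its work, reports back.
	go func() {
		for range loose {
			log = append(log, "tie laces")
			tied <- struct{}{}
		}
	}()

	for step := 1; step <= totalSteps; step++ {
		if step == looseAt {
			loose <- struct{}{} // pause running and switch to the other job
			<-tied              // resume only once the laces are tied
		}
		log = append(log, fmt.Sprintf("run step %d", step))
	}
	close(loose) // lets the lace-tying goroutine exit
	return log
}

func main() {
	for _, entry := range jog(4, 3) {
		fmt.Println(entry)
	}
}
```

Calling `jog(4, 3)` yields two running steps, then "tie laces", then the remaining two running steps: two jobs in progress during the same period, with exactly one active at each moment.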

2. What is Parallelism and how is it different from Concurrency?

Parallelism means executing multiple jobs at literally the same instant. That sounds a bit like concurrency, but it is not the same thing. Let’s go back to the jogging example. Suppose our runner is listening to music while he runs. In this case he really is doing two jobs, running and listening to music, at the same time. That is, these two actions occur in parallel.

3. Concurrency and Parallelism from a technical point of view

Now that you have seen how parallelism and concurrency work through real-world examples, let us look at them from a technical point of view.

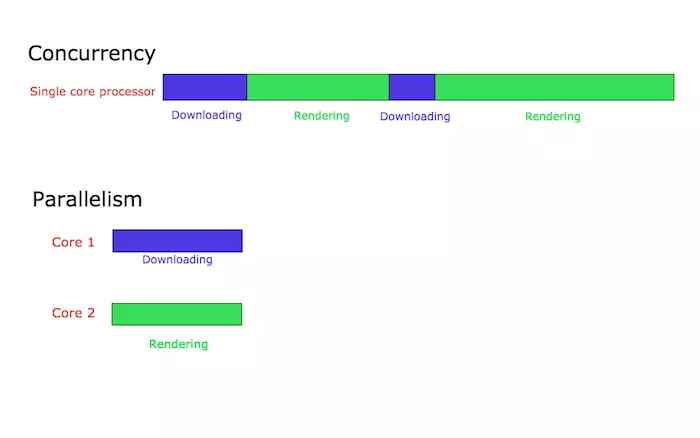

Let’s say you are developing a web browser. A web browser has many different components; two of them are rendering web content and downloading files from the internet. Suppose we organize the code so that each of these functions can execute independently. If you run this browser on a single-core processor, the processor can context-switch between downloading files and rendering web content. In this case the processor is handling the jobs concurrently. The essence is that concurrent jobs start at different times and their execution periods overlap, even though only one of them is actually running at any instant. Because the switching happens so quickly, we hardly notice any delay, and it feels as if the two jobs are happening at the same time.

Next, suppose the browser runs on a multi-core processor. In this case the two jobs, downloading files and rendering web content, can run on two different cores at the same time. This is called parallel processing.
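The single-core and multi-core cases can be imitated in Go with `runtime.GOMAXPROCS`, which limits how many operating system threads may execute Go code simultaneously. The sketch below is an assumption-laden illustration, not a real browser: `busyWork` and `runBrowser` are hypothetical names, and the "download" and "render" jobs are just CPU-bound loops. With `procs` set to 1 the two goroutines can only be interleaved (concurrency); with 2 or more, and at least two physical cores available, they may run in parallel.

```go
package main

import (
	"fmt"
	"runtime"
	"sync"
)

// busyWork stands in for a CPU-bound browser component (hypothetical):
// it simply sums the integers 1..n.
func busyWork(n int64) int64 {
	var sum int64
	for i := int64(1); i <= n; i++ {
		sum += i
	}
	return sum
}

// runBrowser runs a "download" job and a "render" job as two goroutines,
// with at most `procs` threads executing Go code at once. It restores the
// previous GOMAXPROCS setting before returning.
func runBrowser(procs int) (download, render int64) {
	prev := runtime.GOMAXPROCS(procs)
	defer runtime.GOMAXPROCS(prev)

	var wg sync.WaitGroup
	wg.Add(2)
	go func() { defer wg.Done(); download = busyWork(1000000) }()
	go func() { defer wg.Done(); render = busyWork(2000000) }()
	wg.Wait() // both jobs have finished once Wait returns
	return download, render
}

func main() {
	d, r := runBrowser(1) // concurrent: one thread switches between the jobs
	fmt.Println("1 proc: ", d, r)
	d, r = runBrowser(2) // potentially parallel on a multi-core machine
	fmt.Println("2 procs:", d, r)
}
```

The results are identical in both modes; only the way the work is scheduled differs. Whether the second call actually runs in parallel depends on the hardware and the scheduler.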

Parallel processing does not always result in faster execution, because components running in parallel often have to communicate with each other. For example, in the browser above, a completion message is displayed to the user after a file download finishes. This requires communication between the download handler and the renderer that draws the web page on screen. When the components run concurrently on a single core, this communication is relatively cheap. When they run in parallel on multiple cores, the same communication tends to consume more resources.
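The communication between the download handler and the renderer could look like the following Go sketch. It assumes a channel is used to pass the completion messages; the functions `download` and `render` and the file names are hypothetical and carry out no real network I/O or drawing. Every send on the unbuffered channel makes the downloader wait until the renderer is ready to receive, which is the kind of coordination cost described above.

```go
package main

import "fmt"

// download pretends to fetch each file and reports every completed file on
// the done channel (hypothetical stand-in for a real download handler).
func download(files []string, done chan<- string) {
	for _, f := range files {
		// Real network I/O would happen here.
		done <- f // blocks until the renderer receives the message
	}
	close(done) // no more downloads
}

// render receives completion messages and returns the lines it would show
// to the user (hypothetical stand-in for a real renderer).
func render(done <-chan string) []string {
	var shown []string
	for f := range done {
		shown = append(shown, "Download complete: "+f)
	}
	return shown
}

func main() {
	done := make(chan string) // unbuffered: sender and receiver must meet
	go download([]string{"a.zip", "b.pdf"}, done)
	for _, msg := range render(done) {
		fmt.Println(msg)
	}
}
```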