Hi everybody,

We meet again to continue our series on sharing tech knowledge. Today I will learn about, and share with you, a language that is hot in the IT community: Golang.

As most of you know, Go (Golang) was designed and developed at Google. It aims to help the software industry make better use of multi-core processors and run many tasks concurrently, thanks to built-in goroutines and channels. Because it compiles to native code and handles concurrency well, it runs fast and is a popular choice for large projects that need high performance in the Industry 4.0 technology trend.
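To make the multi-core point concrete, here is a small, generic sketch (not part of the crawler, and not from the original article) of Go's concurrency primitives: the work is split into chunks, each chunk is summed in its own goroutine, and a `sync.WaitGroup` waits for all of them. The helper names `sumRange` and `parallelSum` are hypothetical.

```go
package main

import (
	"fmt"
	"runtime"
	"sync"
)

// sumRange adds the integers in [from, to); it returns 0 for an empty range.
func sumRange(from, to int) int {
	total := 0
	for i := from; i < to; i++ {
		total += i
	}
	return total
}

// parallelSum splits [0, n) into one chunk per worker and sums each chunk
// in its own goroutine; the Go runtime schedules goroutines across CPU cores.
func parallelSum(n, workers int) int {
	partial := make([]int, workers) // each goroutine writes only its own slot, so no lock is needed
	var wg sync.WaitGroup
	chunk := (n + workers - 1) / workers // ceiling division
	for w := 0; w < workers; w++ {
		from := w * chunk
		to := from + chunk
		if to > n {
			to = n
		}
		wg.Add(1)
		go func(w, from, to int) {
			defer wg.Done()
			partial[w] = sumRange(from, to)
		}(w, from, to)
	}
	wg.Wait() // block until every goroutine has finished
	total := 0
	for _, p := range partial {
		total += p
	}
	return total
}

func main() {
	workers := runtime.NumCPU()
	fmt.Println("cores:", workers)
	fmt.Println("sum:", parallelSum(1_000_000, workers))
}
```

The same idea (many lightweight goroutines instead of heavy OS threads) is what lets Go programs such as crawlers keep several requests in flight at once.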

Today I have put together a small example that crawls data from Bing, to see how Golang works and how hard it is to use. I invite everyone to read along and practice.

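I. Set up Golang

1.1 Download Golang

Open a terminal, download the Go archive, and extract it into /usr/local (if an older Go is already installed in /usr/local/go, remove that directory first):

```shell
wget https://dl.google.com/go/go1.15.7.linux-amd64.tar.gz
sudo tar -C /usr/local -xzf go1.15.7.linux-amd64.tar.gz
```

These commands download the Go 1.15.7 installation file as a .tar.gz archive and install it by unpacking it into /usr/local/go.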

1.2 Add Go to PATH

Adding /usr/local/go/bin to the PATH variable lets your shell find the go command from any directory. Open the .bashrc file with:

```shell
nano ~/.bashrc
```

and add the following export line at the bottom of the file:

```shell
export PATH=$PATH:/usr/local/go/bin
```

Then run the command below to reload .bashrc:

```shell
source ~/.bashrc
```

The PATH variable is now updated.

1.3 Verify the installation

To check whether the installation succeeded and which Go version is installed, run:

```shell
go version
```

You should see output showing your version:

```
go version go1.15.7 linux/amd64
```

That completes the Go installation. Next, I will initialize the project and install the packages.

For more details on installing Go, see the official guide: https://golang.org/doc/install

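II. Install packages

Create your project directory anywhere, initialize a Go module inside it, and then install the packages (bing-crawler is only an example module name; use any name you like):

```shell
go mod init bing-crawler
go get -u github.com/gocolly/colly
go get -u github.com/go-sql-driver/mysql
```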

In this project, I install 2 packages: gocolly/colly and go-sql-driver/mysql.

- colly (https://github.com/gocolly/colly): lets you easily extract structured data from web pages, which can be used for many applications such as data mining, data processing, or storage.
- mysql (https://github.com/go-sql-driver/mysql): a MySQL driver for Go's database/sql package, which makes working with a MySQL database convenient.

Both packages are popular and widely used in the Go community because they cover common needs and are easy to use.

After these commands finish, your project directory contains a go.mod file (created by go mod init, with the dependencies added by go get) and a go.sum file with checksums. go.mod is similar to PHP's composer.json or Node.js's package.json: it records the module name and the packages and versions used in the project.

OK, with the setup done and the packages installed, let's start crawling data from Bing.

III. Start crawling

3.1 Objectives

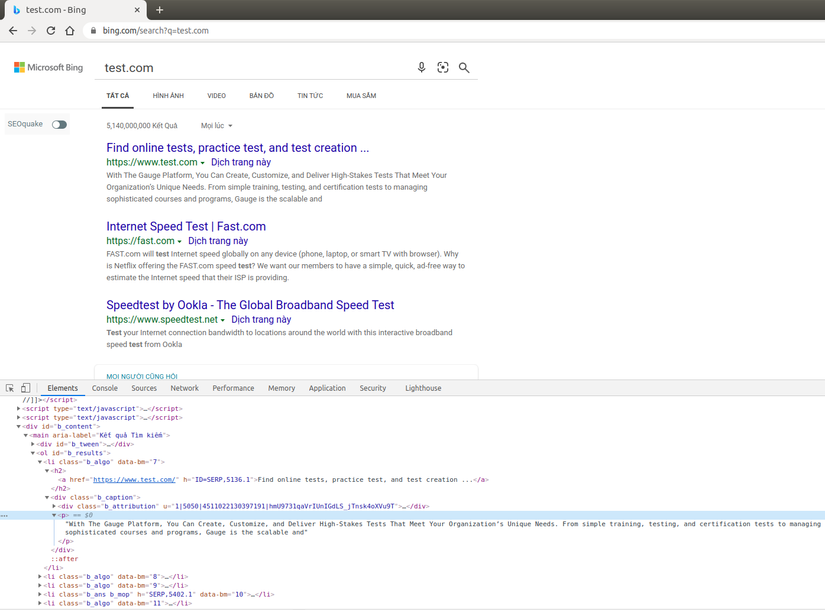

Crawl the search results from the Bing URL https://www.bing.com/search?q=test.com and save each result's title, link, and description to the database.
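The crawler below hard-codes the search URL. If you want to crawl other keywords, a small hypothetical helper like `bingSearchURL` can build the URL with the standard `net/url` package so spaces and symbols are percent-encoded correctly. This sketch only sets the `q` parameter already used in the article; it does not assume any other Bing parameters.

```go
package main

import (
	"fmt"
	"net/url"
)

// bingSearchURL builds a Bing search URL for an arbitrary keyword.
// url.Values.Encode escapes the query, e.g. a space becomes "+".
func bingSearchURL(keyword string) string {
	u := url.URL{
		Scheme:   "https",
		Host:     "www.bing.com",
		Path:     "/search",
		RawQuery: url.Values{"q": {keyword}}.Encode(),
	}
	return u.String()
}

func main() {
	fmt.Println(bingSearchURL("test.com"))     // same URL as in the article
	fmt.Println(bingSearchURL("golang colly")) // keyword containing a space
}
```

The result can be passed straight to `c.Visit(...)` in place of the literal string.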

3.2 Crawl Bing data

A Go program starts executing from the main() function in package main, so I will create a main.go file as the project's entry point and put the main() function there.

```go
package main

import (
	"fmt" // print to the screen
	"log" // logging

	"github.com/gocolly/colly" // colly library for crawling data
)

// Data holds one crawled search result.
type Data struct {
	title       string
	link        string
	description string
}

func main() {
	crawl()
}

func crawl() {
	c := colly.NewCollector()

	// Called before each request is sent to fetch the HTML
	c.OnRequest(func(r *colly.Request) {
		fmt.Printf("Visiting: %s\n", r.URL)
	})

	// Handles errors that occur while fetching the HTML
	c.OnError(func(_ *colly.Response, err error) {
		log.Println("Something went wrong:", err)
	})

	// Called after the HTML response has been received
	c.OnResponse(func(r *colly.Response) {
		fmt.Printf("Visited: %s\n", r.Request.URL)
	})

	// Extracts data from every element matching .b_algo (one search result)
	c.OnHTML(".b_algo", func(e *colly.HTMLElement) {
		data := Data{}
		data.title = e.ChildText("h2")                 // text of the child h2 tag
		data.link = e.ChildText(".b_caption cite")     // text of the child cite tag
		data.description = e.ChildText(".b_caption p") // text of the child p tag
		// Print the extracted values
		fmt.Printf("- Title: %s\n- Link: %s\n- Description: %s\n", data.title, data.link, data.description)
	})

	// Called once the crawl job for the page has finished
	c.OnScraped(func(r *colly.Response) {
		fmt.Println("Finished", r.Request.URL)
	})

	// The collector visits the URL and triggers the callbacks above
	if err := c.Visit("https://www.bing.com/search?q=test.com"); err != nil {
		log.Println("Visit failed:", err)
	}
}
```

In the code above, I use the colly package to crawl the page, and each step is explained in the comments; it really isn't too difficult. For more details on extracting data, see the colly documentation: http://go-colly.org/
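colly's `ChildText` returns the concatenated text of the matching elements with only the leading and trailing whitespace stripped, so a field can still contain line breaks or runs of spaces depending on Bing's markup. A hypothetical `cleanText` helper, sketched below with only the standard library, normalizes that before printing or storing; you could wrap each `e.ChildText(...)` call with it.

```go
package main

import (
	"fmt"
	"strings"
)

// cleanText collapses every run of whitespace (spaces, tabs, newlines)
// into a single space and trims both ends.
func cleanText(s string) string {
	return strings.Join(strings.Fields(s), " ")
}

func main() {
	raw := "  Test.com \n\t Home   Page "
	fmt.Printf("%q\n", cleanText(raw)) // prints "Test.com Home Page"
}
```

For example: `data.title = cleanText(e.ChildText("h2"))`.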

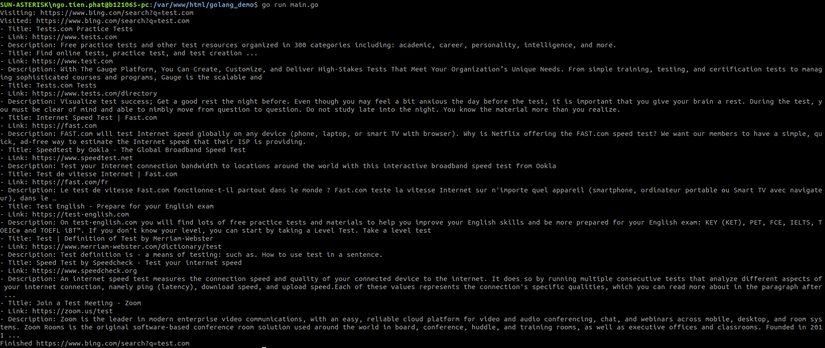

Run the program with:

```shell
go run main.go
```

And here are the crawl results:

IV. Initialize the DB connection

After getting data from Bing, I will set up a database connection so the crawled results can be stored.

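```go
import (
	"database/sql" // working with SQL databases
	"fmt"
	"log"
	"time" // time durations for the connection settings

	"github.com/gocolly/colly"
	_ "github.com/go-sql-driver/mysql" // registers the "mysql" driver with database/sql
)

// dbConnection opens a connection pool to the golang_demo database.
// your_password is a placeholder: replace it with your own MySQL password.
func dbConnection() (*sql.DB, error) {
	db, err := sql.Open("mysql", "root:your_password@tcp(127.0.0.1:3306)/golang_demo")
	if err != nil {
		log.Printf("Error %s when opening DB\n", err)
		return nil, err
	}
	db.SetMaxOpenConns(20)                 // at most 20 open connections
	db.SetMaxIdleConns(20)                 // keep up to 20 idle connections for reuse
	db.SetConnMaxLifetime(time.Minute * 5) // recycle connections after 5 minutes

	// sql.Open may only validate its arguments; Ping checks the server is reachable.
	if err := db.Ping(); err != nil {
		log.Printf("Error %s when pinging DB\n", err)
		db.Close()
		return nil, err
	}
	return db, nil
}
```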

Here I have updated the import block, adding 3 packages (database/sql, time, and the go-sql-driver/mysql driver), and added 1 function that opens the database connection. Setting up the connection feels quite similar to Node.js; the differences are mainly in error handling and variable declarations.

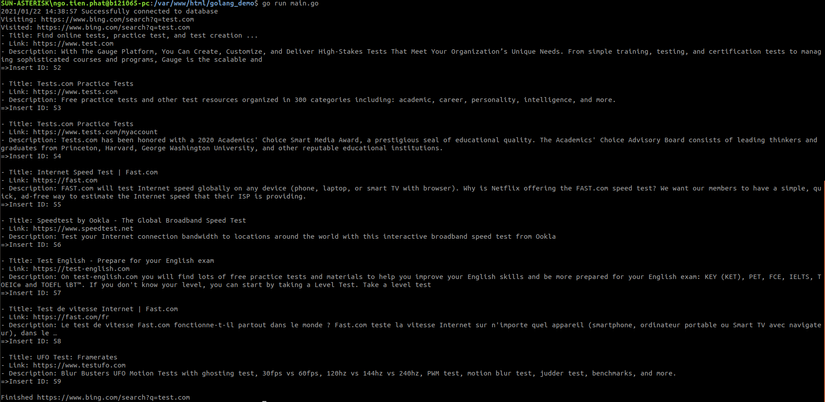

V. Insert into the database

Next, I will insert the crawled data into the database.

```go
func main() {
	db, err := dbConnection() // open the DB connection
	if err != nil {           // stop if the connection fails
		log.Printf("Error %s when getting db connection", err)
		return
	}
	defer db.Close()
	log.Printf("Successfully connected to database")
	crawl(db) // run the crawler
}

func crawl(db *sql.DB) { // the function now also receives the *sql.DB handle
	// ...collector creation and the OnRequest/OnError/OnResponse callbacks stay unchanged...

	c.OnHTML(".b_algo", func(e *colly.HTMLElement) {
		data := Data{}
		data.title = e.ChildText("h2")
		data.link = e.ChildText(".b_caption cite")
		data.description = e.ChildText(".b_caption p")
		fmt.Printf("- Title: %s\n- Link: %s\n- Description: %s\n", data.title, data.link, data.description)

		// Prepare the INSERT statement (MySQL "INSERT ... SET" syntax)
		stmt, err := db.Prepare("INSERT bing_data SET title=?,link=?,description=?")
		checkErr(err)
		defer stmt.Close() // release the prepared statement when this callback returns

		// Bind the values to the placeholders and execute the query
		res, err := stmt.Exec(data.title, data.link, data.description)
		checkErr(err)

		lastID, err := res.LastInsertId() // ID of the row just inserted
		if err != nil {
			log.Fatal(err)
		}
		fmt.Printf("=> Insert ID: %d\n\n", lastID) // print the inserted ID
	})

	// ...the OnScraped callback and c.Visit(...) stay unchanged, after OnHTML...
}

// checkErr panics on any non-nil error.
func checkErr(err error) {
	if err != nil {
		panic(err)
	}
}
```

Run main.go again to check the results:

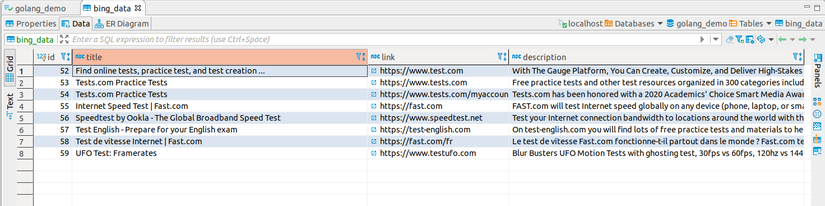

Check in the database:

Done!

VI. Conclusion

So I have completed the crawling process and inserted the results into the database. With my limited knowledge and what I have learned so far, this is my first share about Golang.

I hope this article helps you understand a little more about crawling data and inserting it into a database with Golang.

Personally, I find that Go programs run really fast, the syntax is not too fussy, and setting up a project is very quick. Let's get started and try the code!

Thank you!