Introduction

This article shows how to deploy infrastructure on AWS automatically with Terraform and how to integrate several security practices into the system.

You can refer to the repository here

Deployment model

Infrastructure architecture

In this article we build a multi-tier web architecture and install WordPress to test the services.

* Network layer: VPC, Internet Gateway, security group rules,…

* Database layer: Amazon RDS for MySQL or Amazon Aurora MySQL, AWS Secrets Manager, AWS Backup,…

* Application layer: EC2, EFS, Auto Scaling group, Application Load Balancer, ElastiCache for Memcached, S3, CloudFront,…

Note: To save part of the cost, we do not use private subnets. Instead, we configure security group rules to control traffic to the resources.
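As a rough illustration of the note above, a VPC with only public subnets could be declared as follows. This is a sketch rather than the repository's actual code: it assumes the community `terraform-aws-modules/vpc/aws` module, and the name, CIDR ranges, and availability zones are placeholder values.

```hcl
# Hypothetical sketch: public-subnet-only VPC (all values are placeholders).
module "vpc" {
  source = "terraform-aws-modules/vpc/aws"

  name = "example-vpc"
  cidr = "10.0.0.0/16"

  azs            = ["ap-southeast-1a", "ap-southeast-1b"]
  public_subnets = ["10.0.1.0/24", "10.0.2.0/24"]

  # No private subnets are declared, so no NAT gateway is needed.
  enable_nat_gateway = false
}
```

Because every resource then sits in a public subnet, the security group rules become the main control over what can reach it.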

Deploy on Git

When the source code is hosted on GitHub, GitLab, or Bitbucket, we need CI/CD tools and automated checks so that commits from different contributors can be integrated and deployed without conflicting with one another.

Note: The default values for resources are in the file /main/variables.tf

Workflow

Checkov

Checkov is a tool that automatically checks IaC configuration files before they are deployed. It scans them for misconfigurations related to security or standards compliance. Checkov supports IaC formats such as Terraform, CloudFormation, Dockerfile, and Kubernetes. Its policies can be predefined or custom.

In this system, Checkov is set up as follows:

.github/workflows/release.yaml

```yaml
name: Release
on: push
jobs:
  checkov-job:
    runs-on: ubuntu-latest
    name: Release
    steps:
      - name: Checkout repo
        uses: actions/checkout@master

      - name: Run Checkov action
        id: checkov
        uses: bridgecrewio/checkov-action@v12.1347.0
        with:
          directory: ./main
          # file: example/tfplan.json # optional: provide the path for resource to be scanned. This will override the directory if both are provided.
          # check: CKV_AWS_1 # optional: run only a specific check_id. can be comma separated list
          # skip_check: CKV_AWS_2 # optional: skip a specific check_id. can be comma separated list
          quiet: true # optional: display only failed checks
          soft_fail: true # optional: do not return an error code if there are failed checks
          framework: terraform # optional: run only on a specific infrastructure {cloudformation,terraform,kubernetes,all}
          output_format: github_failed_only # optional: the output format, one of: cli, json, junitxml, github_failed_only, or sarif. Default: sarif
          download_external_modules: true # optional: download external terraform modules from public git repositories and terraform registry
          log_level: WARNING # optional: set log level. Default WARNING
          # external_checks_dirs: ../Modules/S3, ../Modules/Database, ../Modules
          # config_file: path/this_file
          # baseline: ./checkov-report/.checkov.baseline # optional: Path to a generated baseline file. Will only report results not in the baseline.
          container_user: 1000 # optional: Define what UID and / or what GID to run the container under to prevent permission issue

      - name: Render terraform docs and push changes back to PR
        uses: terraform-docs/gh-actions@main
        with:
          working-dir: ./main
          output-file: README.md
          output-method: inject
          git-push: "true"
```

Checkov checks whether the generated resources meet the security requirements and gives developers recommendations for fixing any failed checks.
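When a reported finding is an accepted risk, Checkov supports inline suppression comments inside the resource block. The sketch below only illustrates the syntax: the bucket resource and its name are hypothetical, and `CKV_AWS_20` (an S3 bucket ACL that allows public read access) is just an example check ID.

```hcl
# Hypothetical resource used only to demonstrate the suppression syntax.
resource "aws_s3_bucket" "static_assets" {
  #checkov:skip=CKV_AWS_20:The bucket intentionally serves public static content
  bucket = "example-static-assets"
}
```

The text after the second colon is a free-form justification, which keeps the reason for the exception next to the code it applies to.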

Cost Estimate

Along with deploying resources, deployment and operating costs also need to be kept under control. Infracost (infracost.io) estimates the cost of the resources Terraform will create and posts the estimate directly on the pull request, which makes it easy for the team to analyze costs before making changes.

.github/workflows/infra-cost.yaml

```yaml
# The GitHub Actions docs (https://docs.github.com/en/actions/reference/workflow-syntax-for-github-actions#on)
# describe other options for 'on', 'pull_request' is a good default.
on: [pull_request]
# env:
  # If you use private modules you'll need this env variable to use
  # the same ssh-agent socket value across all jobs & steps.
  # SSH_AUTH_SOCK: /tmp/ssh_agent.sock
jobs:
  infracost:
    name: Infracost
    runs-on: ubuntu-latest
    permissions:
      contents: read
      pull-requests: write

    env:
      TF_ROOT: ./main
      # This instructs the CLI to send cost estimates to Infracost Cloud. Our SaaS product
      # complements the open source CLI by giving teams advanced visibility and controls.
      # The cost estimates are transmitted in JSON format and do not contain any cloud
      # credentials or secrets (see https://infracost.io/docs/faq/ for more information).
      INFRACOST_ENABLE_CLOUD: true
      # If you're using Terraform Cloud/Enterprise and have variables or private modules stored
      # on there, specify the following to automatically retrieve the variables:
      # INFRACOST_TERRAFORM_CLOUD_TOKEN: ${{ secrets.TFC_TOKEN }}
      # INFRACOST_TERRAFORM_CLOUD_HOST: app.terraform.io # Change this if you're using Terraform Enterprise

    steps:
      # If you use private modules, add an environment variable or secret
      # called GIT_SSH_KEY with your private key, so Infracost can access
      # private repositories (similar to how Terraform/Terragrunt does).
      # - name: add GIT_SSH_KEY
      #   run: |
      #     ssh-agent -a $SSH_AUTH_SOCK
      #     mkdir -p ~/.ssh
      #     echo "${{ secrets.GIT_SSH_KEY }}" | tr -d '\r' | ssh-add -
      #     ssh-keyscan github.com >> ~/.ssh/known_hosts

      - name: Setup Infracost
        uses: infracost/actions/setup@v2
        # See https://github.com/infracost/actions/tree/master/setup for other inputs
        # If you can't use this action, see Docker images in https://infracost.io/cicd
        with:
          api-key: ${{ secrets.INFRACOST_API_KEY }}

      # Checkout the base branch of the pull request (e.g. main/master).
      - name: Checkout base branch
        uses: actions/checkout@v2
        with:
          ref: '${{ github.event.pull_request.base.ref }}'

      # Generate Infracost JSON file as the baseline.
      - name: Generate Infracost cost estimate baseline
        run: |
          infracost breakdown --path=${TF_ROOT} \
                              --format=json \
                              --out-file=/tmp/infracost-base.json

      # Checkout the current PR branch so we can create a diff.
      - name: Checkout PR branch
        uses: actions/checkout@v2

      # Generate an Infracost diff and save it to a JSON file.
      - name: Generate Infracost diff
        run: |
          infracost diff --path=${TF_ROOT} \
                         --format=json \
                         --compare-to=/tmp/infracost-base.json \
                         --out-file=/tmp/infracost.json

      # Posts a comment to the PR using the 'update' behavior.
      # This creates a single comment and updates it. The "quietest" option.
      # The other valid behaviors are:
      #   delete-and-new - Delete previous comments and create a new one.
      #   hide-and-new - Minimize previous comments and create a new one.
      #   new - Create a new cost estimate comment on every push.
      # See https://www.infracost.io/docs/features/cli_commands/#comment-on-pull-requests for other options.
      - name: Post Infracost comment
        run: |
          infracost comment github --path=/tmp/infracost.json \
                                   --repo=$GITHUB_REPOSITORY \
                                   --github-token=${{github.token}} \
                                   --pull-request=${{github.event.pull_request.number}} \
                                   --behavior=update
```

Atlantis

Atlantis is an open-source tool that integrates with Terraform and lets you run terraform plan and terraform apply directly from a pull request. Commenting on the pull request sends a trigger to the Atlantis HTTP endpoint; after running the plan or apply, Atlantis posts the output back as a comment on the pull request. This lets us review the Terraform changes before merging them into the master branch.

Note: For security reasons, this repository does not include Atlantis.

Without Atlantis, our workflow might look like this:

Workflow with Atlantis integration:

As soon as a pull request is created for a change, it triggers Atlantis to run a plan. You can also trigger a plan manually by commenting atlantis plan:

Once the code review passes and the pull request is approved, comment atlantis apply to trigger Atlantis to apply the changes to the system:

Atlantis is very effective for teamwork. With the source code managed in Git, pull requests created by team members can be planned and reviewed by the team leader, who decides right on the pull request whether to merge. To deploy an Atlantis server with Git, please refer here

The workflow for Atlantis in this system is defined as follows:

atlantis/repos.yaml

```yaml
repos:
  - id: /.*/
    allowed_overrides: [workflow]
    allow_custom_workflows: true
    apply_requirements: [approved]
```

/atlantis.yaml

```yaml
version: 3
projects:
  - name: MAIN
    dir: main
    autoplan:
      when_modified:
        - "*.tf"
        - "../Modules/**/*.tf"
    apply_requirements: [approved]
```

Make infrastructure more secure

Security Groups

Traffic to resources is controlled by security group rules. The EC2 instances accept HTTP traffic only from the Application Load Balancer (plus SSH from a whitelisted CIDR range), and the database accepts connections only from the EC2 instances.

Create security group for EC2 (/modules/networking/main.tf)

```hcl
module "ec2_sg" {
  source  = "terraform-aws-modules/security-group/aws"
  version = "4.9.0"

  name        = "${var.sg_prefix}-ec2-sg"
  description = "Security group for EC2 instance in Auto Scaling"
  vpc_id      = module.vpc.vpc_id

  ingress_cidr_blocks = var.ssh_whitelist
  ingress_rules       = ["ssh-tcp"]

  ingress_with_source_security_group_id = [
    {
      rule                     = "http-80-tcp"
      source_security_group_id = module.alb_sg.security_group_id
      description              = "Allow HTTP from Load Balancer to EC2 instance"
    }
  ]

  egress_cidr_blocks = ["0.0.0.0/0"]
  egress_rules       = ["all-all"]
}
```

Create security group for database (/modules/networking/main.tf)

```hcl
module "db_sg" {
  source  = "terraform-aws-modules/security-group/aws"
  version = "4.9.0"

  name        = "${var.sg_prefix}-db-sg"
  description = "Security group for DB instance/cluster"
  vpc_id      = module.vpc.vpc_id

  ingress_with_source_security_group_id = [
    {
      rule                     = "mysql-tcp"
      source_security_group_id = module.ec2_sg.security_group_id
      description              = "Allow connection from EC2 instance to DB"
    }
  ]

  egress_cidr_blocks = ["0.0.0.0/0"]
  egress_rules       = ["all-all"]
}
```
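The EC2 security group above references `module.alb_sg`, which is not shown in this article. A minimal sketch of what the head of that chain could look like, assuming the same community module and a load balancer that accepts HTTP from the internet (the name suffix, description, and open CIDR range are assumptions, not the repository's values):

```hcl
# Hypothetical sketch of the load balancer security group.
module "alb_sg" {
  source  = "terraform-aws-modules/security-group/aws"
  version = "4.9.0"

  name        = "${var.sg_prefix}-alb-sg"
  description = "Security group for the Application Load Balancer"
  vpc_id      = module.vpc.vpc_id

  # Assumption: the load balancer is the only public HTTP entry point.
  ingress_cidr_blocks = ["0.0.0.0/0"]
  ingress_rules       = ["http-80-tcp"]

  egress_cidr_blocks = ["0.0.0.0/0"]
  egress_rules       = ["all-all"]
}
```

With this chain, web traffic can only flow from the internet to the load balancer, from the load balancer to EC2, and from EC2 to the database, even though all resources live in public subnets.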

AWS Secrets Manager

AWS Secrets Manager helps you store and protect the credentials used to access applications or services. Users and applications retrieve the credentials by calling the Secrets Manager API. It can also rotate credentials periodically, either on its own or through a Lambda function, which makes resources that depend on sensitive credentials more secure.

In this system, we use AWS Secrets Manager to store the database login information, and a Lambda function changes the database password every 30 days.

Save Database credentials in AWS Secrets Manager (/modules/database/db-rotate.tf)

```hcl
resource "aws_secretsmanager_secret" "rds_master_credentials" {
  name                    = "${var.db_identifier}-rds-master-credentials"
  recovery_window_in_days = 0
  # kms_key_id = data.aws_kms_key.this.arn
}

resource "aws_secretsmanager_secret_version" "rds_master_credentials_version" {
  depends_on = [
    aws_secretsmanager_secret.rds_master_credentials
  ]
  secret_id     = aws_secretsmanager_secret.rds_master_credentials.id
  secret_string = <<EOF
{
  "engine": "mysql",
  "username": "${var.master_username}",
  "password": "${var.master_password}",
  "host": "${local.db_enpoint}",
  "port": 3306,
  "dbname": "${var.db_name}"
}
EOF
}
```
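For an application to read this secret through the Secrets Manager API, its IAM role needs permission to call `secretsmanager:GetSecretValue` on it. The following is a minimal sketch of such a policy. The policy name and the idea of attaching it to the EC2 instance role are assumptions for illustration, not something the repository is confirmed to do.

```hcl
# Hypothetical sketch: read-only access to the database secret.
data "aws_iam_policy_document" "read_db_secret" {
  statement {
    effect    = "Allow"
    actions   = ["secretsmanager:GetSecretValue"]
    resources = [aws_secretsmanager_secret.rds_master_credentials.arn]
  }
}

resource "aws_iam_policy" "read_db_secret" {
  name   = "${var.db_identifier}-read-db-secret" # placeholder name
  policy = data.aws_iam_policy_document.read_db_secret.json
}
```

Scoping `resources` to the single secret ARN keeps the permission as narrow as possible.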

Automatically rotate passwords (/modules/database/db-rotate.tf)

```hcl
resource "aws_lambda_function" "rds_password_rotation" {
  # If the file is not in the current working directory you will need to include a
  # path.module in the filename.
  filename      = "${path.module}/resources/rds_password_rotation/lambda_function.zip"
  function_name = "${var.db_identifier}-lambda-rds-password-rotation"
  role          = aws_iam_role.lambda_rotation_role.arn
  handler       = "lambda_function.lambda_handler"

  # The filebase64sha256() function is available in Terraform 0.11.12 and later
  # For Terraform 0.11.11 and earlier, use the base64sha256() function and the file() function:
  # source_code_hash = "${base64sha256(file("lambda_function_payload.zip"))}"
  source_code_hash = filebase64sha256("${path.module}/resources/rds_password_rotation/lambda_function.zip")

  runtime = "python3.7"

  vpc_config {
    subnet_ids = var.subnet_ids_group
    security_group_ids = flatten([
      aws_security_group.lambda_rotation_sg.id,
      var.vpc_security_group_ids
    ])
  }

  # kms_key_arn = data.aws_kms_key.this.arn
  environment {
    variables = {
      SECRETS_MANAGER_ENDPOINT = "https://secretsmanager.ap-southeast-1.amazonaws.com"
    }
  }
}

resource "aws_lambda_permission" "allow_secret_manager_rotate_postgre_password" {
  function_name = aws_lambda_function.rds_password_rotation.function_name
  statement_id  = "AllowExecutionSecretManager"
  action        = "lambda:InvokeFunction"
  principal     = "secretsmanager.amazonaws.com"
}

resource "aws_secretsmanager_secret_rotation" "password_rotation" {
  secret_id           = aws_secretsmanager_secret.rds_master_credentials.id
  rotation_lambda_arn = aws_lambda_function.rds_password_rotation.arn
  rotation_rules {
    automatically_after_days = var.db_password_rotation_days
  }
  depends_on = [
    aws_rds_cluster.this,
    aws_rds_cluster_instance.this,
    aws_db_instance.this,
    aws_secretsmanager_secret_version.rds_master_credentials_version
  ]
}
```

Password rotation cycle (/main/variables.tf)

```hcl
variable "db_password_rotation_days" {
  type        = number
  description = "Password RDS rotation day"
  default     = 30
}
```
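Secrets Manager accepts rotation intervals from 1 to 1000 days, so a `validation` block (available in Terraform 0.13 and later) can reject out-of-range values at plan time. The variant below is a suggestion, not the repository's code, and the reworded description and error message are illustrative.

```hcl
# Suggested variant of the variable with an early range check.
variable "db_password_rotation_days" {
  type        = number
  description = "Number of days between automatic RDS password rotations"
  default     = 30

  validation {
    condition     = var.db_password_rotation_days >= 1 && var.db_password_rotation_days <= 1000
    error_message = "The rotation interval must be between 1 and 1000 days."
  }
}
```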

Local Deployment

This is similar to the Git deployment but without the workflows, which suits cases where you manage the source code yourself.

Requirements

To deploy an infrastructure on AWS with Terraform, you need to install the AWS CLI and Terraform.

Implementation steps

- Download the source code from GitHub.

- Open a terminal and change to the main directory inside the downloaded folder: `cd ./main`

- Initialize the working directory with `terraform init`. This step is required so that the providers referenced in the main.tf file are downloaded.

- Review the changes before applying: `terraform plan`

- Apply the configuration: `terraform apply -auto-approve`

- To destroy the infrastructure, use `terraform destroy -auto-approve`

Check the result

- After applying, Terraform prints the Outputs, including the DNS name of the load balancer. Open the WordPress site at the address given by lb_dns_name.

- To log in to the admin site, append /wp-admin to the website's URL

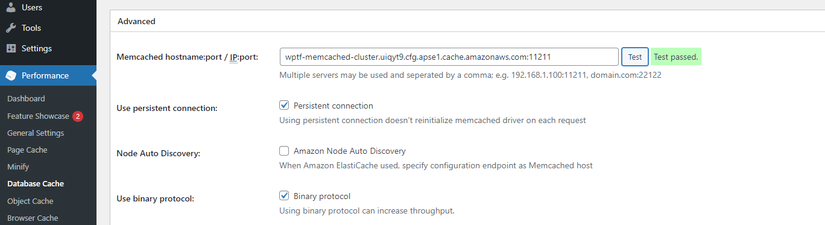

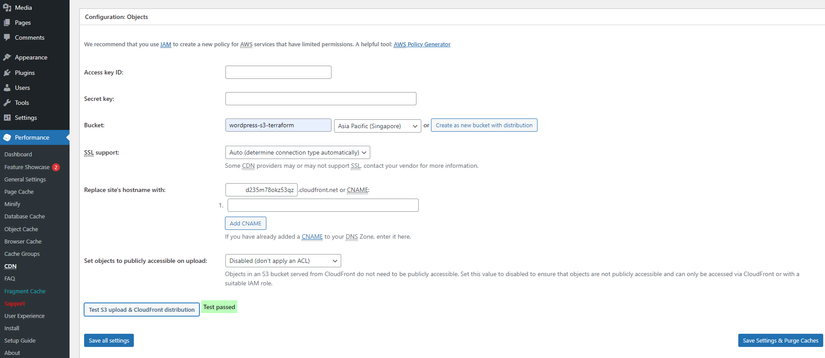

- To use CDN and Memcached, in the Plugins section, activate W3 Total Cache

- Check Database Cache with Memcached


- Test CDN with Cloudfront

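The lb_dns_name value used in the first step comes from a Terraform output. A minimal sketch of how such an output might be declared is shown here. The resource address `aws_lb.this` is a placeholder, since the article does not show how the load balancer is defined.

```hcl
# Hypothetical sketch: "aws_lb.this" is a placeholder resource address.
output "lb_dns_name" {
  description = "Public DNS name of the Application Load Balancer"
  value       = aws_lb.this.dns_name
}
```

After an apply, running `terraform output lb_dns_name` prints the value again without re-running the deployment.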

Note

The default values are located in the file /main/variables.tf. You can change them to suit your needs.